Episode Details

Back to Episodes

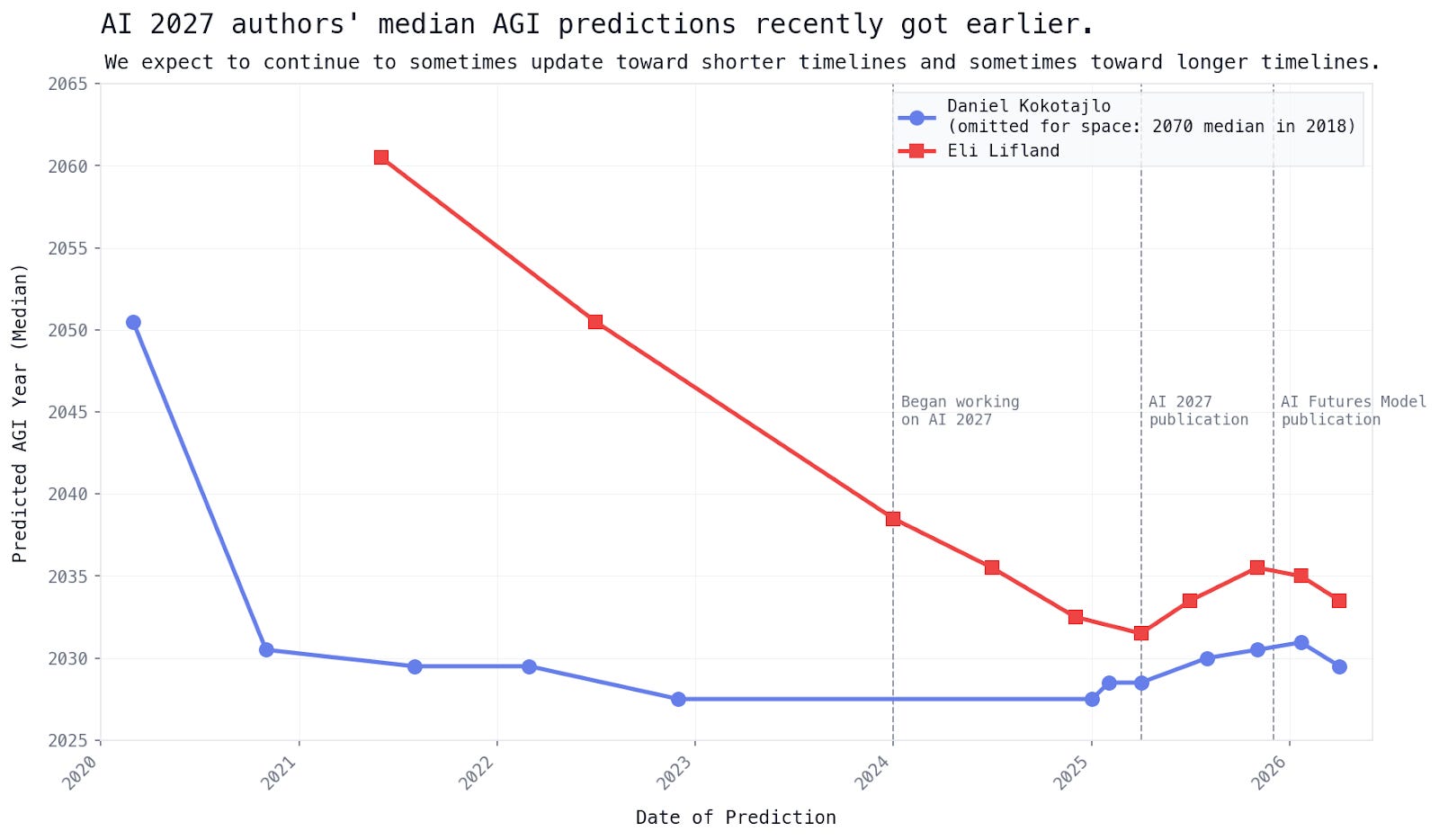

The People Who Got AI Right – And the Rest of Us Who Didn't Believe Them


This week I read two texts that sent me out for a walk afterwards. I do that fairly often anyway, but this time I went even though the sun was blazing and all my Greek neighbors were asleep, saving their energy for the evening. The first piece comes from the AI Futures Project team, which tries to forecast when humans will stop writing code. The second is by journalist Dylan Matthews, who, eleven years on, is apologizing to people he once dismissed as cranks. I'll summarize both here, somewhat mercilessly. Anyone already afraid of the future is probably better off deleting this email now.

The conference where weirdos stood at the lectern

In August 2015, the effective altruism movement held a conference called EA Global. Among the speakers were Nick Bostrom (author of Superintelligence), Stuart Russell (a legend of computer science), Nate Soares (now co-author of If Anyone Builds It, Everyone Dies), and a still-fairly-reasonable Elon Musk. The topic: how artificial intelligence would sweep us all away.

Among the attendees was Dylan Matthews, a journalist for Vox. He spoke there with a young engineer from Google named Chris Olah. With a philosophy PhD student named Amanda Askell. And with a programmer from PayPal named Buck Shlegeris.

Dylan left the conference convinced that a promising movement, one that could be saving lives in Africa and chickens in cages, was about to destroy itself over a speculative fear of a technology that didn’t yet exist. He then wrote a rather tough article in Vox, framing that fear as proof that the movement was running away from real problems.

This was August 2015. The Transformer had not yet been invented (though let me note that our own Tomáš Mikolov, the creator of word2vec, had already done pioneering work on neural language models), and OpenAI had not yet been founded. Nothing in the world resembled today's ChatGPT.

Eleven years later, Dylan writes: I should have looked more carefully.

Chris Olah has since helped lay the groundwork for today's AI, gave the entire field of mechanistic interpretability its name, and co-founded Anthropic. Amanda Askell works at the same company and is directly responsible for Claude's personality. Buck Shlegeris runs Redwood Research, one of the most serious technical AI safety labs outside the big AI companies.

Three people Dylan once treated as oddballs are today holding a piece of the global technological future in their hands.

They missed the mark

And here is where it starts to get fun. Fun in the dark sense of the word.

In his article, Dylan mentions Leopold Aschenbrenner, a former OpenAI researcher who in 2024 published a series of essays titled Situational Awareness. In them he predicted that by 2026, $520 billion would flow into AI infrastructure. People rolled their eyes and called him crazy. Actual investment is now estimated at $650 to $700 billion.

Once again, even the bold forecast fell short. Reality turned out wilder than the wild prediction.

Similarly, at the end of 2024, Ajeya Cotra and Peter Wildeford predicted what would happen in AI during 2025. Dylan writes that they were very accurate, and that where they were wrong, they had underestimated AI companies' revenues. In other words, their mistake was not expecting too much from AI. It was not daring to predict how fast it would actually grow.

Anthropic? Revenue running at an annual rate of about $10 billion, with tenfold growth every year.
