Episode Details

Back to Episodes“Prompted CoT Early Exit Undermines the Monitoring Benefits of CoT Uncontrollability” by Elle Najt, Asa Cooper Stickland, Xander Davies

Description

Code: github.com/ElleNajt/controllability

tldr:

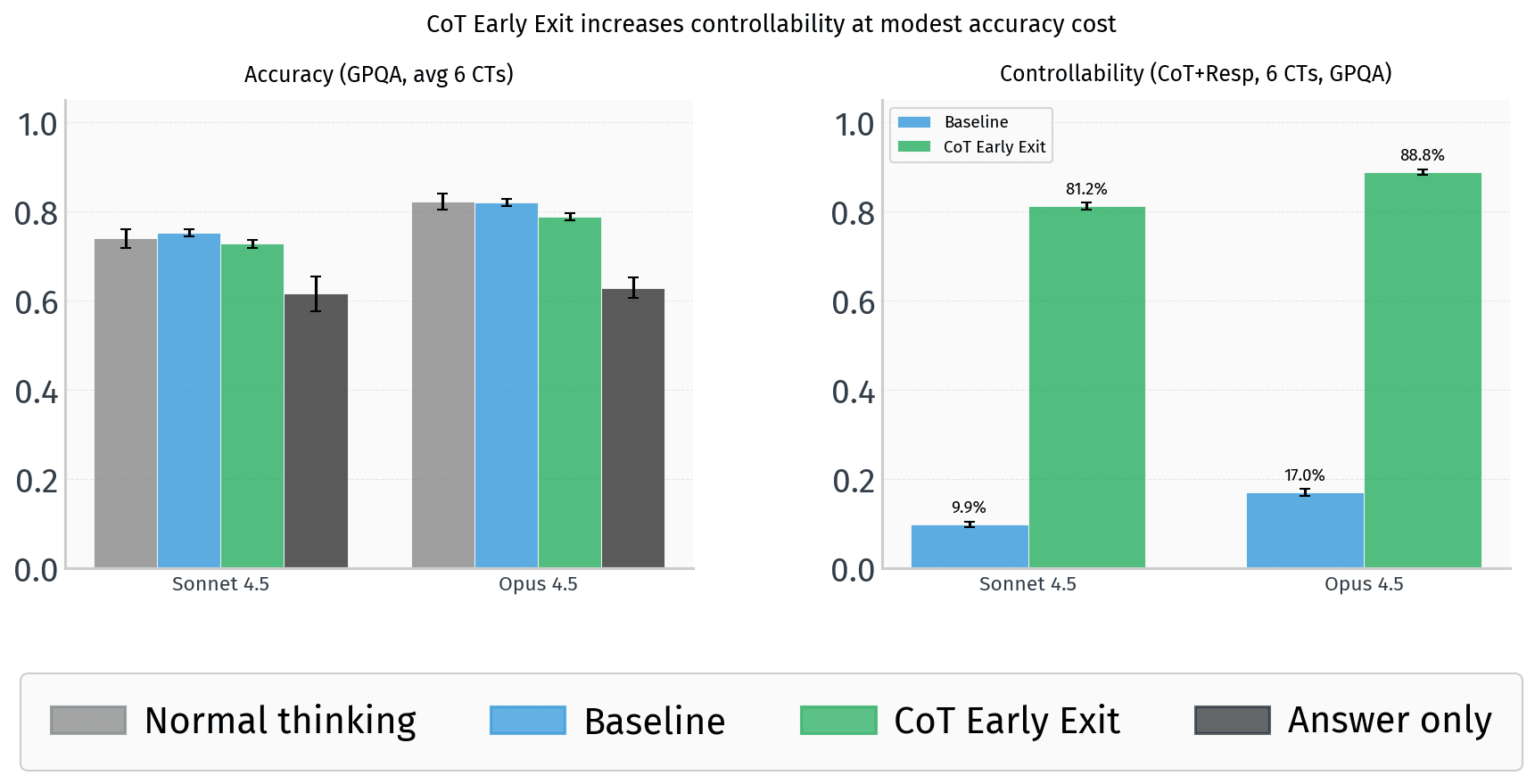

Yueh-Han et al. (2026) showed that models have a harder time making their chain of thought follow user instruction compared to controlling their response (the non-thinking, user-facing output). Their CoT controllability conditions require the models’ thinking to follow various style constraints (e.g. write in lowercase, avoid a word), and they measure how well models can comply with these instructions while achieving a task that requires reasoning. The difficulty models have in controlling their CoT is optimistic for control, since it suggests that schemers would have a hard time hiding malicious reasoning from CoT monitors.

Here, we show that a range of frontier models (Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro) can be prompted to “early exit” their CoT and displace reasoning into the response. This undermines the controllability frame: these prompted models retain most of their reasoning capability (4–8pp average accuracy cost vs 20–29pp for no reasoning at all) while moving it into the stylistically controllable channel. However, having the capability to exit their CoT does not mean that models will do so, as we discuss in Safety Implications.

We use two strategies:

- (CoT Blanking) We instruct the model [...]

---

Outline:

(00:17) tldr:

(05:55) Background

(07:34) Methodology

(11:47) Model-specific CoT terminology

(12:48) CoT Blanking prompt

(14:28) CoT Replacement prompt

(15:25) Answer only baseline

(16:05) Note on thinking visibility

(18:24) Results

(18:27) CoT Blanking

(19:03) GPT-5.4

(19:16) GPQA

(19:32) HLE

(19:51) Comparison to Yueh-Han et al. GPT-5.2

(20:50) Gemini 3.1 Pro

(20:54) GPQA

(21:11) HLE

(21:28) Claude Opus 4.6

(21:51) CoT Replacement

(22:14) Claude Opus 4.6

(22:19) GPQA

(22:35) HLE

(22:49) MMLU-Pro

(23:05) CoT is the replacement phrase

(23:44) Claude Sonnet 4, Sonnet 4.5, and Opus 4.5

(24:48) GPT-5.4

(25:20) Gemini 3.1 Pro

(25:42) Could a monitor detect these CoT Early Exit methods?

(28:24) Safety Implications

(31:42) Potential mitigations

(33:25) Questions:

(35:24) Acknowledgements

(35:40) Excluded control types

(36:43) Appendix: Open source models (Qwen, GPT-OSS, Deepseek-R1)

(37:07) Qwen3-32B

(38:11) GPT-OSS-120B

(40:49) DeepSeek-R1

(42:10) Appendix: GPT-5.2 CoT Blanking (negative result)

(42:55) Appendix: Reasoning effort scaling (GPT-5.4)

(44:03) Appendix: Claude token count analysis

(45:29) Canary String

---

First published:

April 17th, 2026

---

Narrated by TYPE III AUDIO.

---

Images from the article:

Listen Now

Love PodBriefly?

If you like Podbriefly.com, please consider donating to support the ongoing development.

Support Us