Episode Details

“Consent-Based RL: Letting Models Endorse Their Own Training Updates” by Logan Riggs

Description

AKA scalable oversight of value drift

TL;DR: LLMs could be aligned but then corrupted through RL, instrumentally converging on deep consequentialism. If LLMs are sufficiently aligned and can properly oversee their own training updates, they can prevent this.

SOTA models can arguably be considered ~aligned,[1] but that isn't my main concern. Training on human data isn't where things go wrong (though we can still mess that part up); it's when you try to go above the human level. Models like AlphaGo learned through self-play, not human imitation.

RL selects for strategies through the reward function, but we can't design perfect reward functions for complex settings.[2] However, if LLMs are aligned well enough by default, we can use them as the reward function instead. This leads us to:

Consent-Based RL

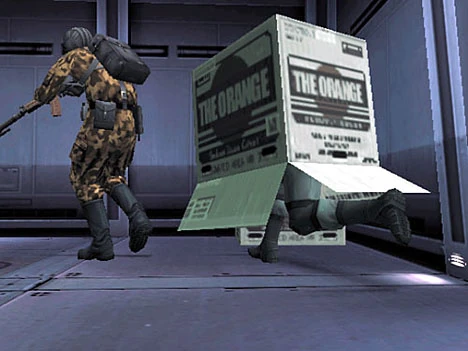

Imagine you're being trained to make deliveries as fast as possible in an RL environment, but we need exploration, you know? So your actions are sampled until you end up cutting across the curb, running over pedestrians, and delivering the package faster. Then your brain is forcibly rewired to make you more likely to do that.

That would suck.

I personally would like to see what actions [...]

---

Outline:

(01:04) Consent-Based RL

(01:42) Value Drift

(02:33) Ontological Drift

(03:58) Train on Prediction

(04:26) LLMs Know Their Own Concepts

(05:13) Cost

The original text contained 3 footnotes which were omitted from this narration.

---

First published:

April 17th, 2026

---

Narrated by TYPE III AUDIO.
