[Linkpost] “You can only build safe ASI if ASI is globally banned” by Connor Leahy

Description

Sometimes people suggest that we should simply build “safe” Artificial Superintelligence (ASI), rather than the presumably “unsafe” kind.[1]

There are various flavors of “safe” people suggest.

- Sometimes they suggest building “aligned” ASI: You have a full agentic autonomous god-like ASI running around, but it really really loves you and definitely will do the right thing.

- Sometimes they suggest we should simply build “tool AI” or “non-agentic” AI.

- Sometimes they have even more exotic, or more obviously-stupid ideas.

Now I could argue at length about why this is astronomically harder than people think it is, why their various proposals are almost universally unworkable, why even attempting this is insanely immoral[2], but that's not the main point I want to make.

Instead, I want to make a simpler point:

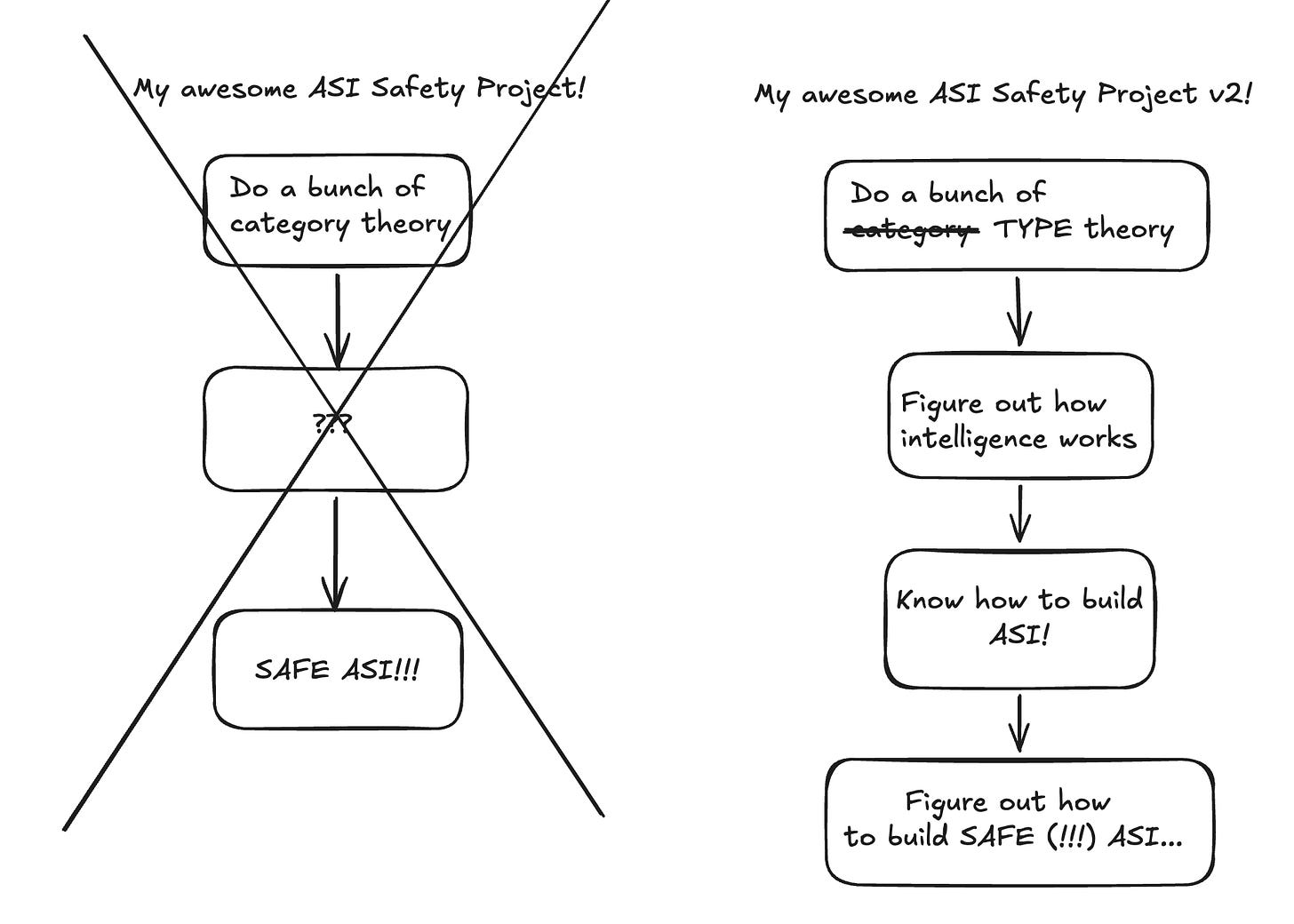

Assume you have a research agenda that, if executed, results in an ASI-tier powerful software system that you can “control”.[3]

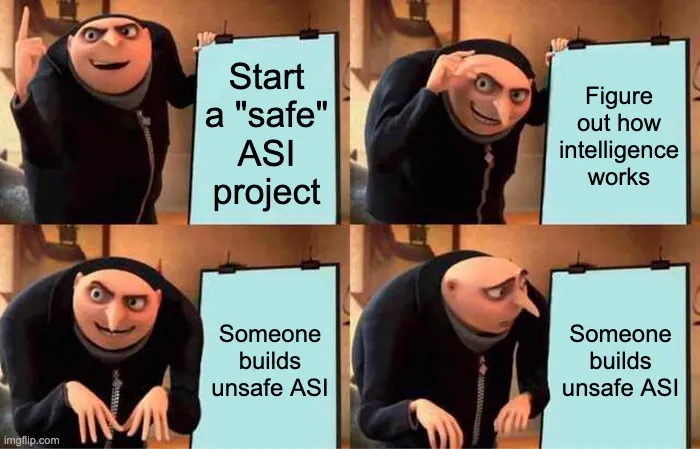

Punchline: On your way to figuring out how to build controllable ASI, you will have figured out how to build unsafe ASI, because unsafe ASI is vastly easier to build than controlled ASI, and is on the same tech path.

You can’t build a controlled [...]

The original text contained 4 footnotes which were omitted from this narration.

---

First published:

April 16th, 2026

Linkpost URL:

https://www.ettf.land/p/you-can-only-build-safe-asi-if-asi

---

Narrated by TYPE III AUDIO.

---
