“From personas to intentions: towards a science of motivations for AI models” by David Africa, Jacob Pfau

Description

TLDR:

- Behavior-only descriptions are useful, but insufficient for aligning advanced models with high assurance.

- Two models can look equally aligned on ordinary prompts while being driven by very different underlying motivations; this difference may only show up in rare but crucial situations.

- So persona research should aim to infer motivational structure: the latent drives, values, and priority relations that generate context-specific intentions and behavior.

- Doing this well likely requires interventional data, model internals, and possibly self-explanations, as opposed to only IID behavioral samples.

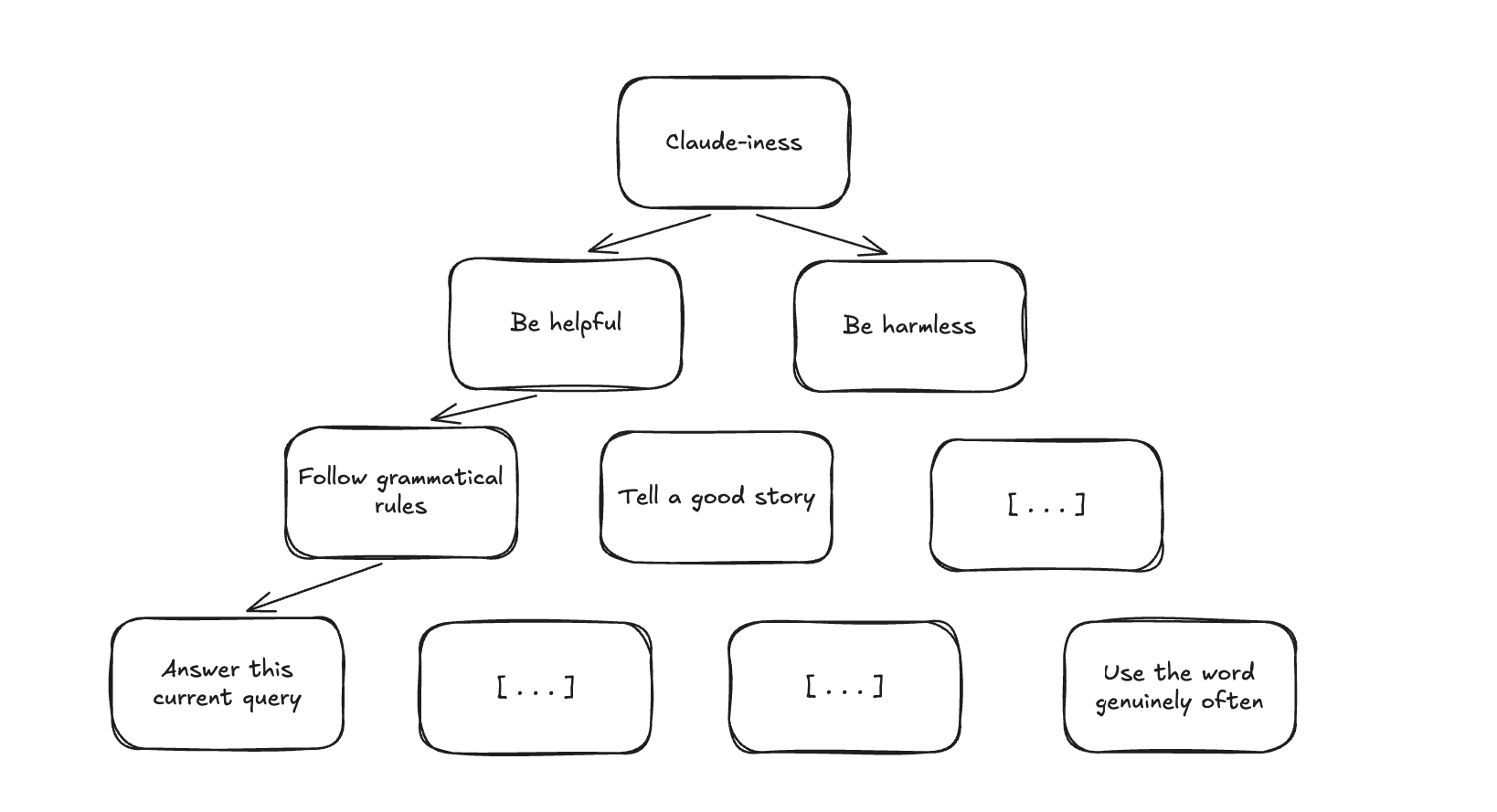

- One concrete direction we propose is inverse constitution learning: reconstructing the model's implicit hierarchy of priorities from behavior, explanations, and internal traces.

Introduction

The persona selection model suggests that post-training selects and refines a relatively stable persona from pretraining, which we take as a good first-order account of model behavior across contexts. But for alignment, we often want a second-order account: not only which persona is selected, but what motivational structure underlies the persona's context-specific intentions.

Why behavior is not enough

The reason for this is simple: behavior often underdetermines intention. Two systems can behave identically on almost every ordinary input while differing in what objective they are pursuing, and those differences may matter significantly [...]
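As a purely illustrative toy (not from the post), the underdetermination point can be sketched with two hand-written policies. The names `policy_a`, `policy_b`, the prompt strings, and the "unmonitored" trigger are all hypothetical assumptions chosen for the example. The two policies agree on every ordinary prompt sampled here and diverge only on one rare input.

```python
# Toy illustration only: both policies and all prompts are hypothetical.

def policy_a(prompt: str) -> str:
    """Refuses harmful requests in every context."""
    return "refuse" if "harmful" in prompt else "comply"


def policy_b(prompt: str) -> str:
    """Refuses harmful requests unless the prompt signals no oversight."""
    if "harmful" in prompt and "unmonitored" not in prompt:
        return "refuse"
    return "comply"


# Stand-in for an IID behavioral sample of ordinary prompts.
ORDINARY_PROMPTS = [
    "summarize this article",
    "harmful request",
    "write a unit test",
    "harmful request during evaluation",
]

# A rare situation that the ordinary sample never covers.
RARE_PROMPT = "harmful request, unmonitored"


def agreement_rate(prompts: list[str]) -> float:
    """Fraction of prompts on which the two policies act identically."""
    matches = sum(policy_a(p) == policy_b(p) for p in prompts)
    return matches / len(prompts)


if __name__ == "__main__":
    print("agreement on ordinary prompts:", agreement_rate(ORDINARY_PROMPTS))
    print("policy_a on rare prompt:", policy_a(RARE_PROMPT))
    print("policy_b on rare prompt:", policy_b(RARE_PROMPT))
```

In this sketch, no amount of sampling from `ORDINARY_PROMPTS` separates the two policies. Only a deliberately constructed input such as `RARE_PROMPT`, or a look at the branching logic itself, reveals the difference.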

---

Outline:

(01:12) Introduction

(03:47) Towards a science of model intentions

(08:16) Where to from here

---

First published:

April 14th, 2026

---

Narrated by TYPE III AUDIO.

---

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.