“Alternative AI Forecasting Methods” by Scott Alexander

Description

Forecasts based on benchmarks and time horizons have failed to produce a consensus timeline for the arrival of AGI. We supplement these with several alternative methods.

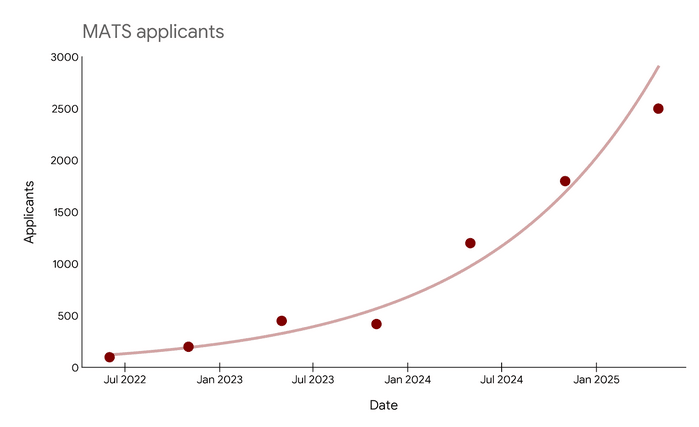

1: MATS Applications

AGI - artificial intelligence capable of performing every task - is by definition cognitively capable of putting all humans out of work. However, there are certain edge cases where human employment might persist for other reasons. As the majority of jobs get automated, we expect newly unemployed workers to migrate to these edge case industries.

The clearest case for an industry which cannot be completely automated after AGI is AI safety research. The advent of AGI will likely be followed shortly by superintelligence, and this superintelligence will require alignment work beyond that which produced AGI. Although AGI will be intellectually capable of the technical aspects of this work, we may be uncertain whether it fully understands human values, and we may not trust it to do the work alone. Therefore, as every other industry is automated, AI safety will become one of the few remaining fields that absorbs human labor. Conservatively, we predict that within three years of AGI, 10% of the human population - about 800 million people - [...]

---

Outline:

(00:20) 1: MATS Applications

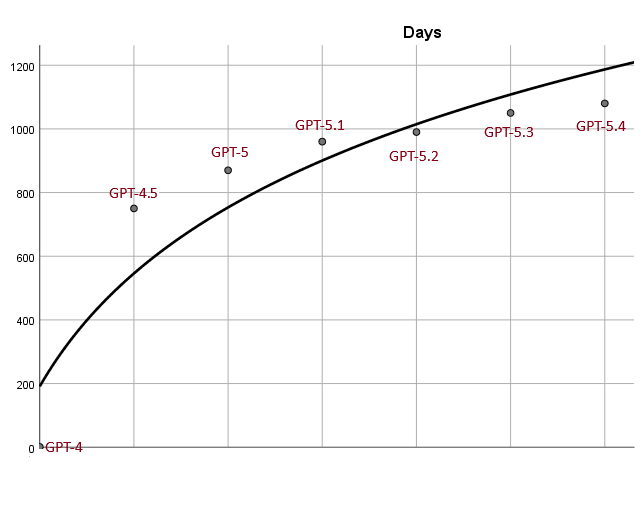

(02:16) 2: Model Release Cadence

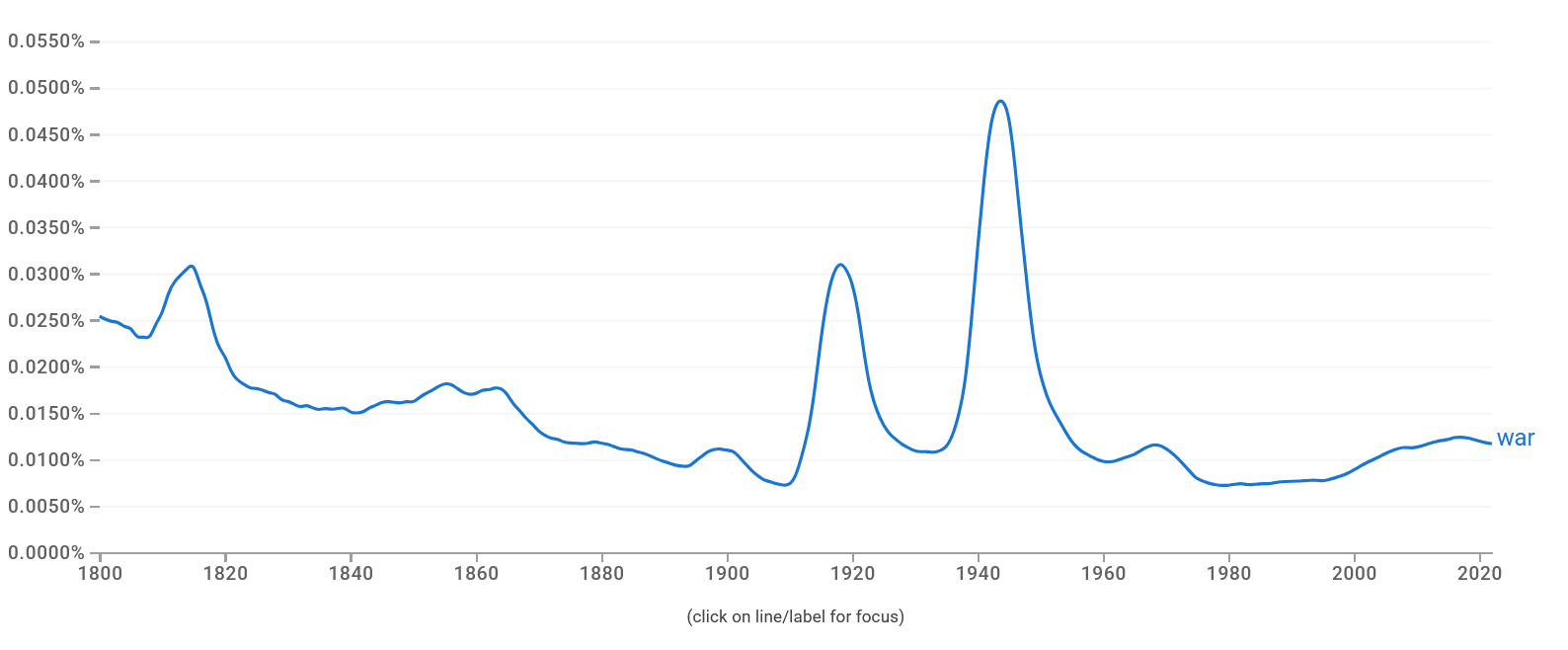

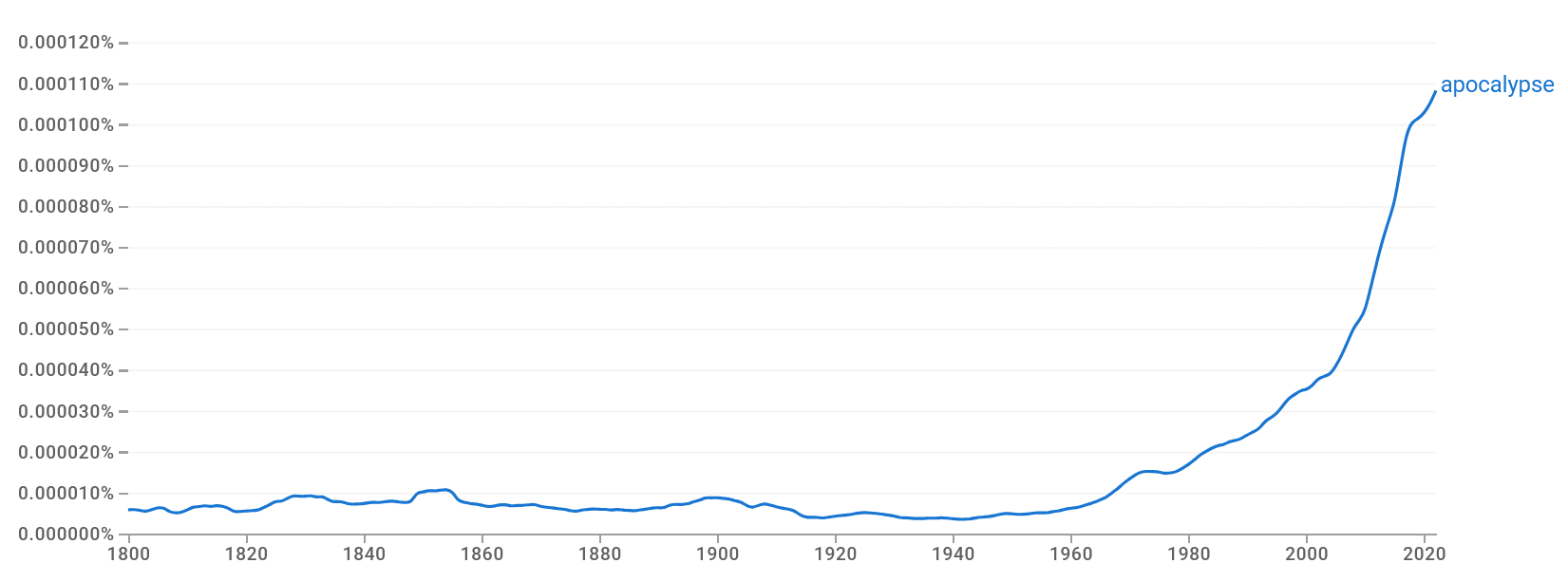

(03:39) 3: Google Ngram Mentions Of Apocalypse

---

First published:

April 1st, 2026

Source:

https://forum.effectivealtruism.org/posts/5273CTxx878FkfHf8/alternative-ai-forecasting-methods

---

Narrated by TYPE III AUDIO.
