Episode Details

“I used to think aligned ASI would be good for all sentient beings; now I don’t know what to think” by MichaelDickens

Description

Cross-posted from my website.

Epistemic status: Speculating with no central thesis. This post is less of an argument and more of a meditation.

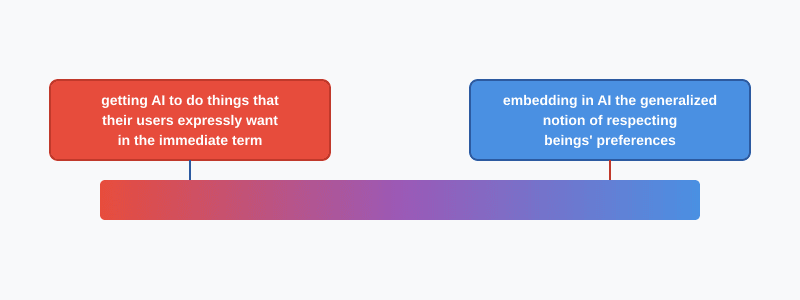

A decade ago, before there was a visible path to AGI and before AI alignment was a significant research field, I figured the solution to the alignment problem would look something like Coherent Extrapolated Volition. I figured we'd find a way to get the AI to internalize human values. I had problems with this approach (why only human values?), but I still felt reasonably confident that the coherent extrapolation of human values would include concern for the welfare of all sentient beings. The CEV-aligned AI would recognize that factory farming is wrong, and that wild animal suffering is a big problem.

Today, the dominant research paradigms in AI alignment have nothing to do with CEV, and I don't know what to think.

As for how promising today's popular research paradigms are, my beliefs are aligned (heh) with those of most MIRI researchers: namely, I don't think these paradigms have promise. For example, see "On how various plans miss the hard bits of the alignment challenge" by Nate Soares. I'm not an alignment researcher, but [...]

The original text contained 8 footnotes which were omitted from this narration.

---

First published: March 25th, 2026

---

Narrated by TYPE III AUDIO.
