“Survey of AI safety leaders on x-risk, AGI timelines, and resource allocation (Feb 2026)” by OllieRodriguez

Description

This report was mostly written by Claude Opus 4.6. We manually checked all claims and didn’t find any errors.

Summary

- We summarise the results of a survey carried out before the 2026 Summit on Existential Security. Respondents were Summit attendees: leaders and key thinkers in the x-risk and AI safety communities.

- The survey asked attendees about their estimates of existential risk, AGI timelines, and resource allocation priorities.

- Survey data comes from the 59 respondents who consented to their answers being shared publicly.[1] Data was collected in February 2026.

X-risk and timelines

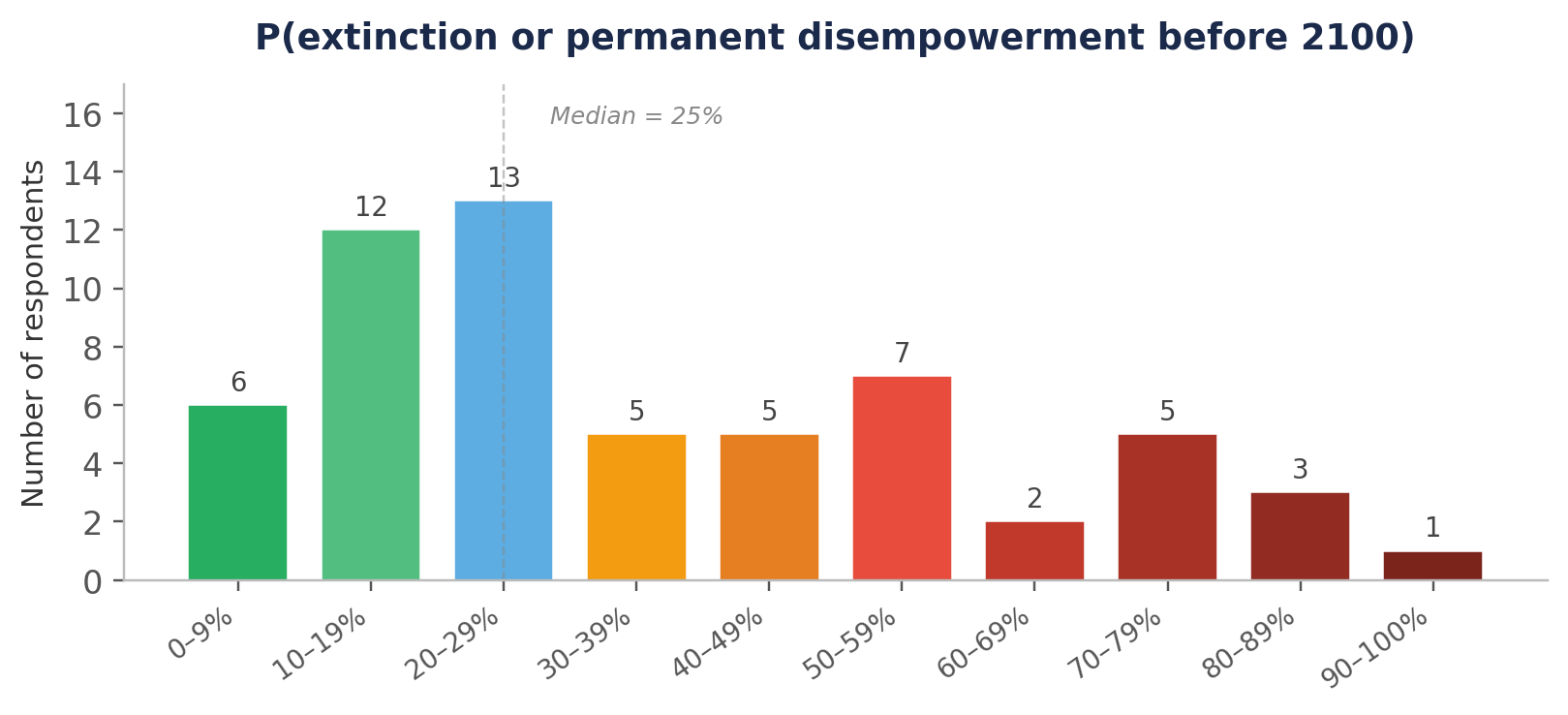

Key results

| Question | Median | Mean |
|---|---|---|
| Probability of human extinction or permanent human disempowerment before 2100 | 25% | 34% |
| 50% chance of AGI (year) | 2033 | 2034 |
| 25% chance of AGI (year, n=48) | 2030 | 2030 |

- Assigned ≥50% chance of AGI by 2030: 22% of respondents
- Assigned ≥50% chance of AGI by 2035: 73% of respondents

We defined AGI as:

An AI system (or collection of systems) that can fully automate the vast majority (>90%) of roles in the 2025 economy. A job is fully automatable when machines could be built to carry out the job better and more cheaply than human workers. Think feasibility, not adoption.
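The key results report medians, means, and the share of respondents past a threshold year. As a rough sketch of how those statistics relate, the Python below computes them from a small, entirely hypothetical list of per-respondent "50% chance of AGI" years; these are not the survey's data. It assumes each respondent's probability of AGI only rises over time, so a 50% year at or before 2030 counts as assigning ≥50% by 2030 (the survey may instead have asked for those probabilities directly). The helper `share_at_or_before` is illustrative, not part of the report.

```python
from statistics import mean, median

# Hypothetical per-respondent answers (NOT the survey's data): the year by
# which each respondent assigns a 50% chance to AGI.
year_50pct = [2027, 2029, 2030, 2032, 2036, 2050]


def share_at_or_before(years, cutoff):
    """Fraction of respondents whose 50% year is at or before `cutoff`.

    Assumes cumulative AGI probability never decreases over time, so a
    50% year <= cutoff implies >= 50% probability of AGI by `cutoff`.
    """
    return sum(1 for y in years if y <= cutoff) / len(years)


print("median 50% year:", median(year_50pct))
print("mean 50% year:", mean(year_50pct))
print("share >=50% by 2030:", share_at_or_before(year_50pct, 2030))
print("share >=50% by 2035:", share_at_or_before(year_50pct, 2035))
```

In this toy data a single late estimate (2050) pulls the mean above the median, which is one common reason the two statistics diverge.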

Resource allocation

- The strongest consensus in resource allocation is [...]

---

Outline:

(00:23) Summary

(01:00) X-risk and timelines

(01:39) Resource allocation

(02:25) Areas of debate and consensus at the event

(02:51) Existential risk estimates

(03:07) Summary statistics

(03:25) Distribution

(04:27) Notable comments

(05:52) AGI timelines

(06:35) Summary statistics

(06:59) Distribution of AGI estimates

(07:54) Notable comments

(08:46) Relationship between timelines and risk

(09:25) Resource allocation priorities

(09:51) Overall preferences

(10:29) Misaligned AI views by timeline subgroup

(11:05) Notable comments

(12:04) Sub-field priorities

(12:55) Notable comments

(13:59) Areas of consensus and debate at the event

(14:08) Areas of broad consensus

(15:09) Areas of active debate

---

First published: March 25th, 2026

---

Narrated by TYPE III AUDIO.
