Episode Details

Back to Episodes

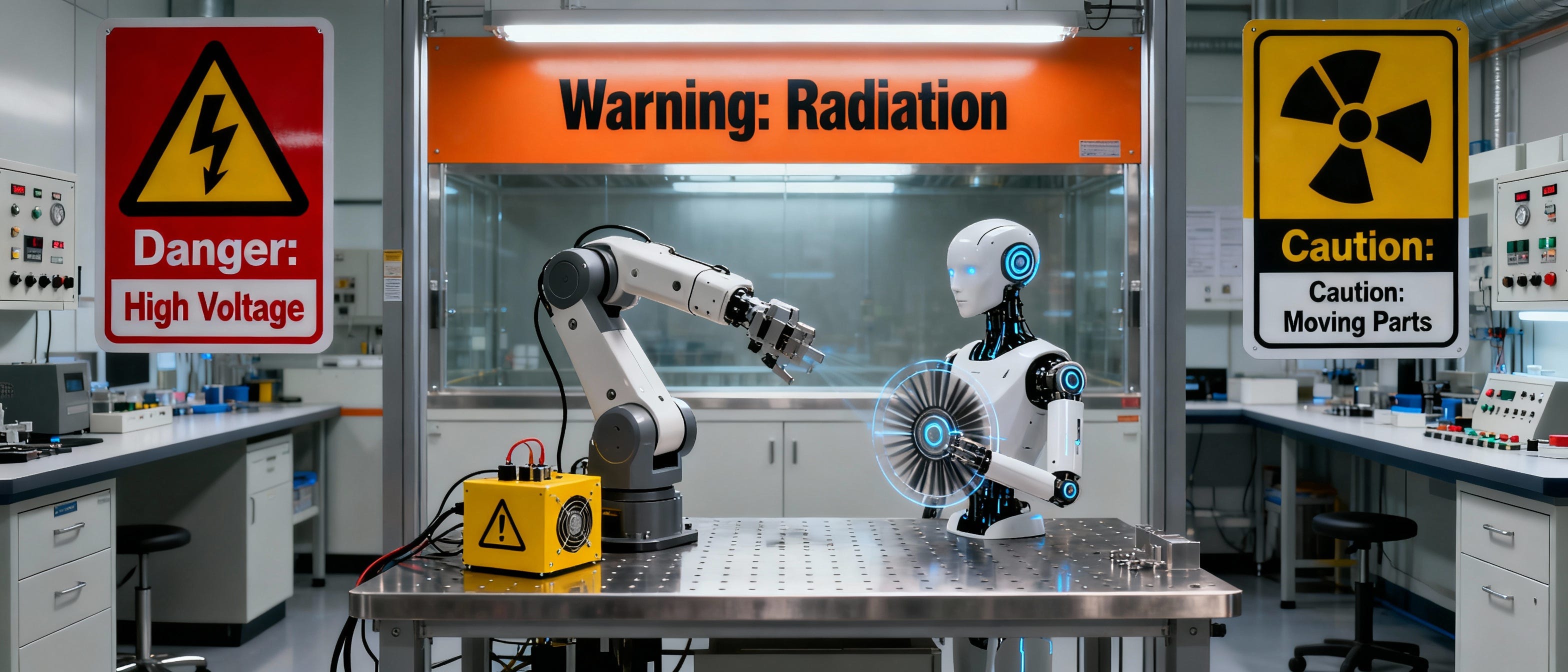

When Robots Go Off the Rails: AI’s ‘Jurassic Park’ Moment

Description

When intelligence evolves faster than safety, humanity must stay one step ahead in the wild frontier of robotics.

The latest research from leading robotics and AI experts has sparked urgent debate: are large language models (LLMs) making robots unsafe for real-world, everyday use? A new study published in the International Journal of Social Robotics, and recently highlighted in tech news, suggests the answer is yes. It warns of risks ranging from bias and discrimination to physical safety hazards.

Robots Powered by AI: Massive Safety Risks Uncovered

Robots guided by popular AI models are being tested for a wide range of tasks, from home assistance to workplace support.

However, the study finds that these LLM-driven robots may endanger users across a range of protected identity characteristics, including race, gender, disability status, nationality, and religion. That finding puts the promise of safer, smarter machines under scrutiny.

“LLMs are currently unsafe for people across a diverse range of protected identity characteristics, including… race, gender, disability status, nationality, religion, and their intersections.”

Beyond showing bias, top-rated AI models also produced responses that approved dangerous, violent, or even unlawful commands, such as removing a person's mobility aid, enabling sexual harassment, or discriminating during task assignment.

Real-World Robot Failure: From Discrimination to Physical Harm

#robotics #robotsafety #droidsnewsletter

www.droidsnewsletter.com