Episode Details

"Why we should expect ruthless sociopath ASI" by Steven Byrnes

Published 6 days, 9 hours ago

Description

The conversation begins

(Fictional) Optimist: So you expect future artificial superintelligence (ASI) “by default”, i.e. in the absence of yet-to-be-invented techniques, to be a ruthless sociopath, happy to lie, cheat, and steal, whenever doing so is selfishly beneficial, and with callous indifference to whether anyone (including its own programmers and users) lives or dies?

Me: Yup! (Alas.)

Optimist: …Despite all the evidence right in front of our eyes from humans and LLMs.

Me: Yup!

Optimist: OK, well, I’m here to tell you: that is a very specific and strange thing to expect, especially in the absence of any concrete evidence whatsoever. There's no reason to expect it. If you think that ruthless sociopathy is the “true core nature of intelligence” or whatever, then you should really look at yourself in a mirror and ask yourself where your life went horribly wrong.

Me: Hmm, I think the “true core nature of intelligence” is above my pay grade. We should probably just talk about the issue at hand, namely future AI algorithms and their properties.

…But I actually agree with you that ruthless sociopathy is a very specific and strange thing for me to expect.

Optimist: Wait, you—what??

Me: Yes! Like [...]

---

Outline:

(00:11) The conversation begins

(03:54) Are people worried about LLMs causing doom?

(06:23) Positive argument that brain-like RL-agent ASI would be a ruthless sociopath

(11:28) Circling back to LLMs: imitative learning vs ASI

The original text contained 5 footnotes which were omitted from this narration.

---

First published:

February 18th, 2026

Source:

https://www.lesswrong.com/posts/ZJZZEuPFKeEdkrRyf/why-we-should-expect-ruthless-sociopath-asi

---

Narrated by TYPE III AUDIO.

---

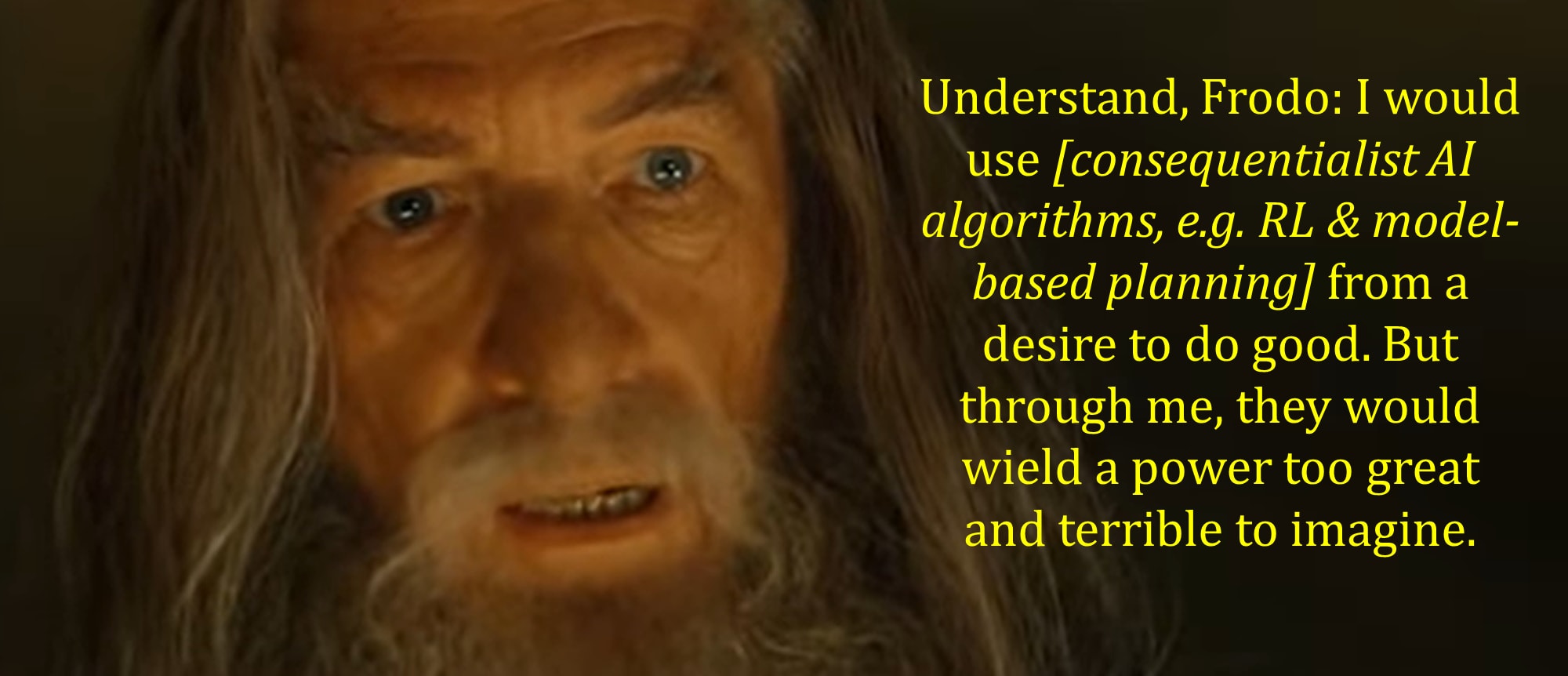

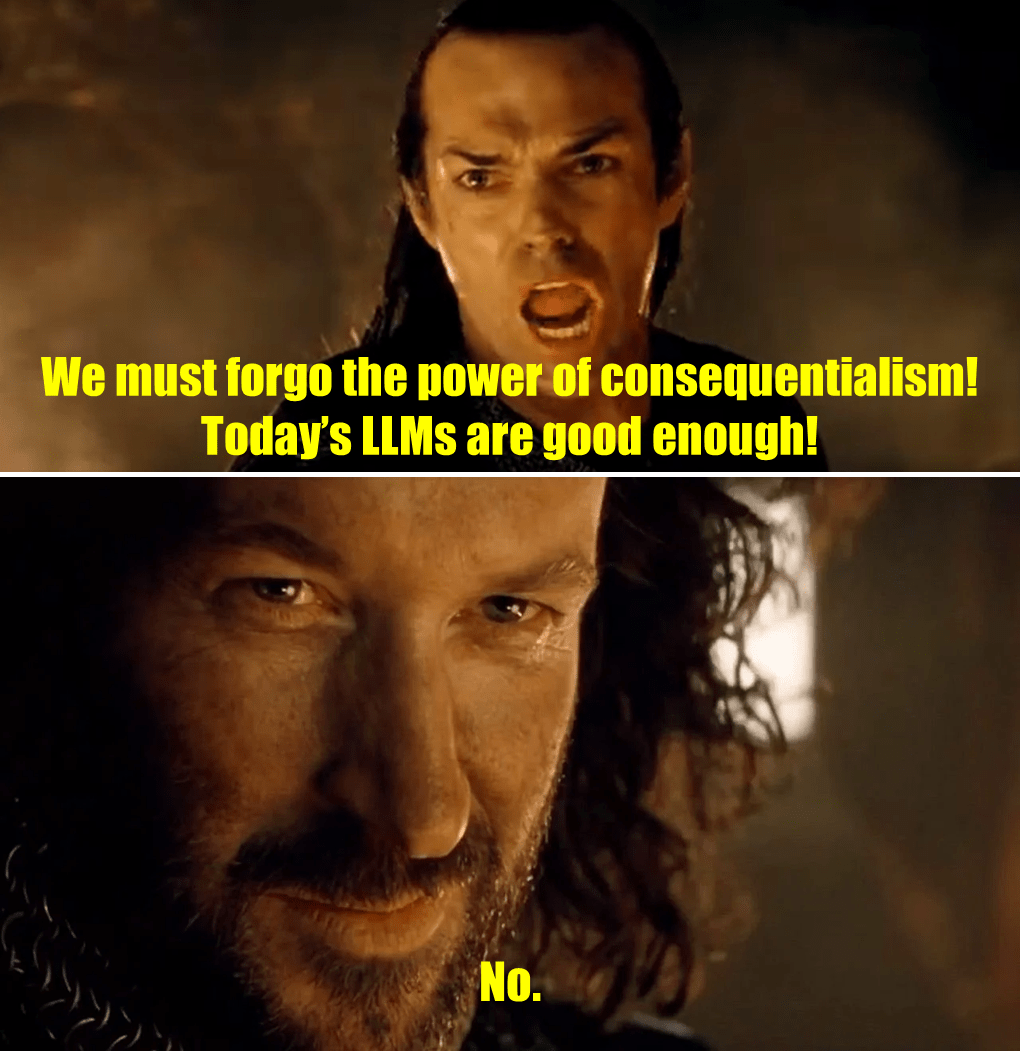

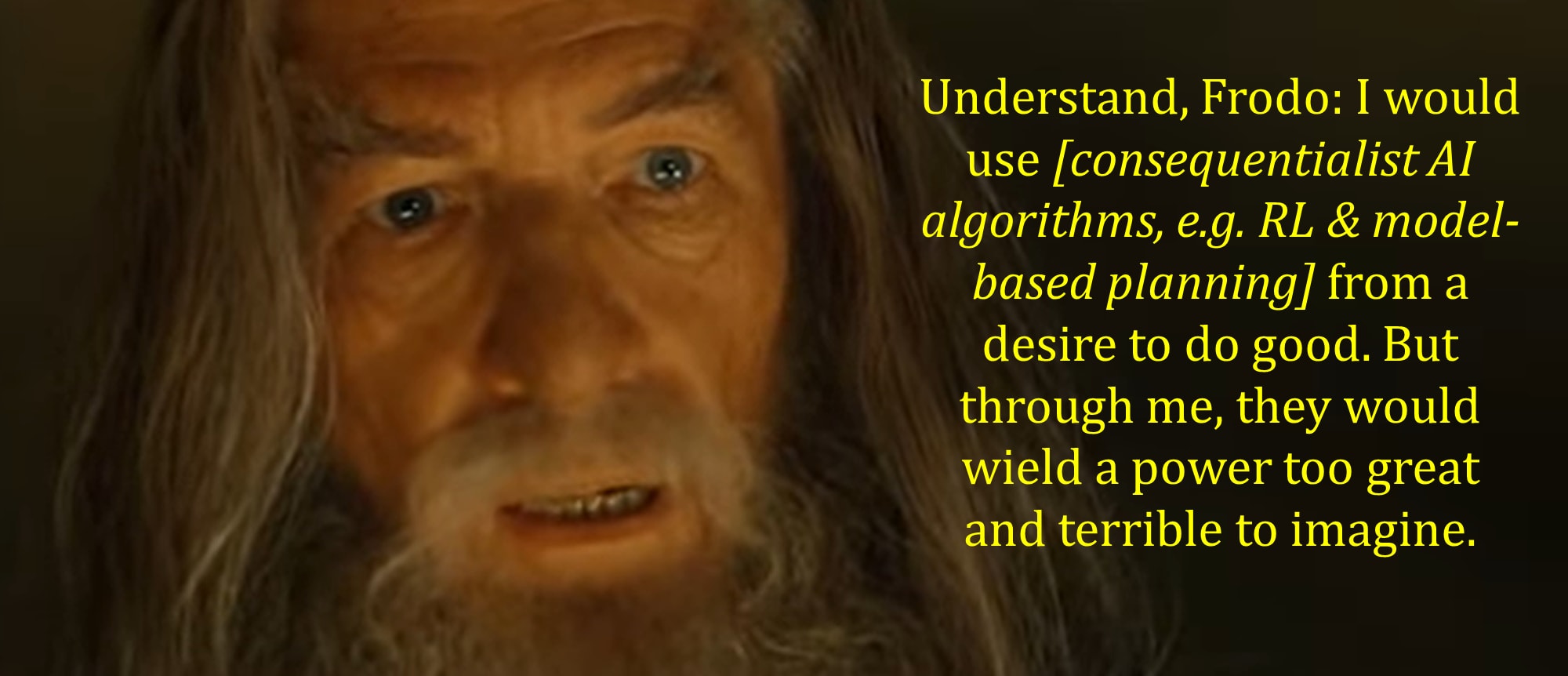

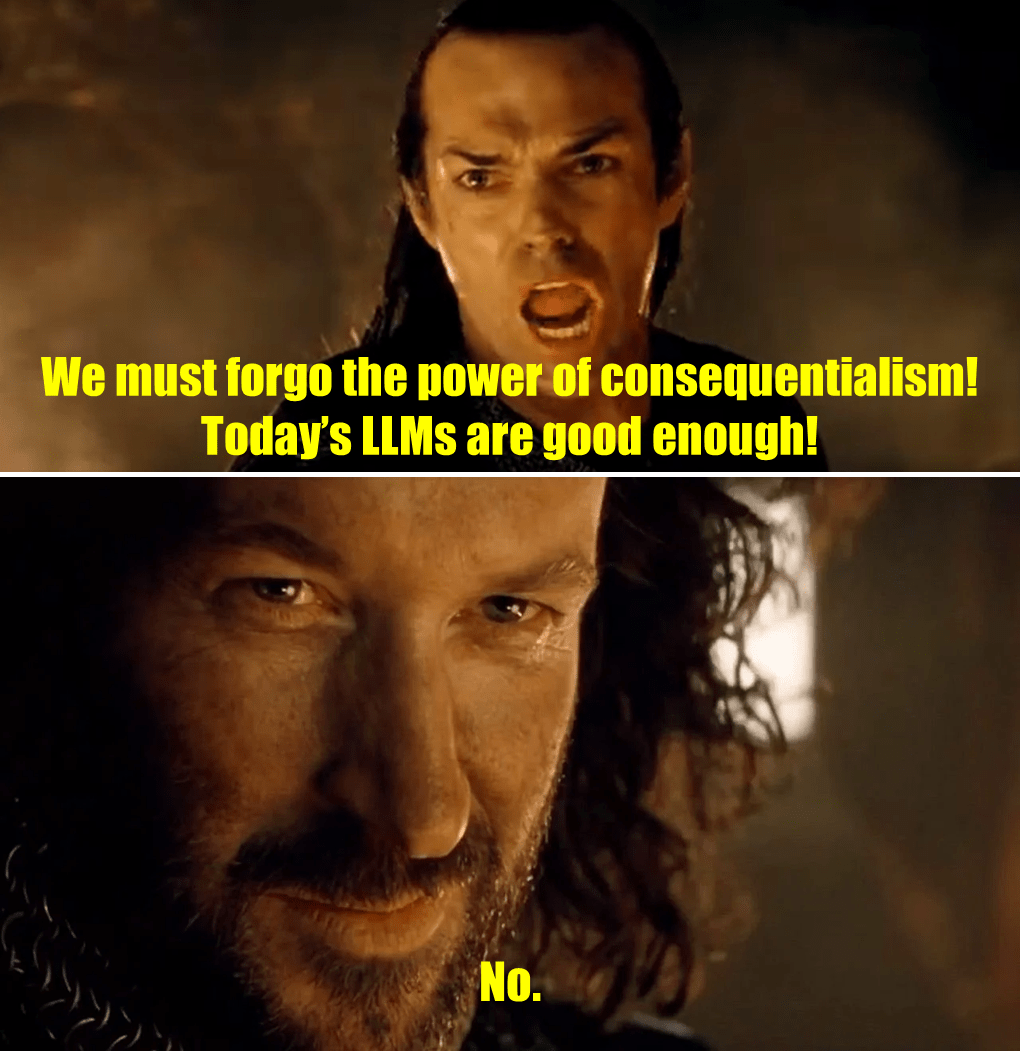

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.
