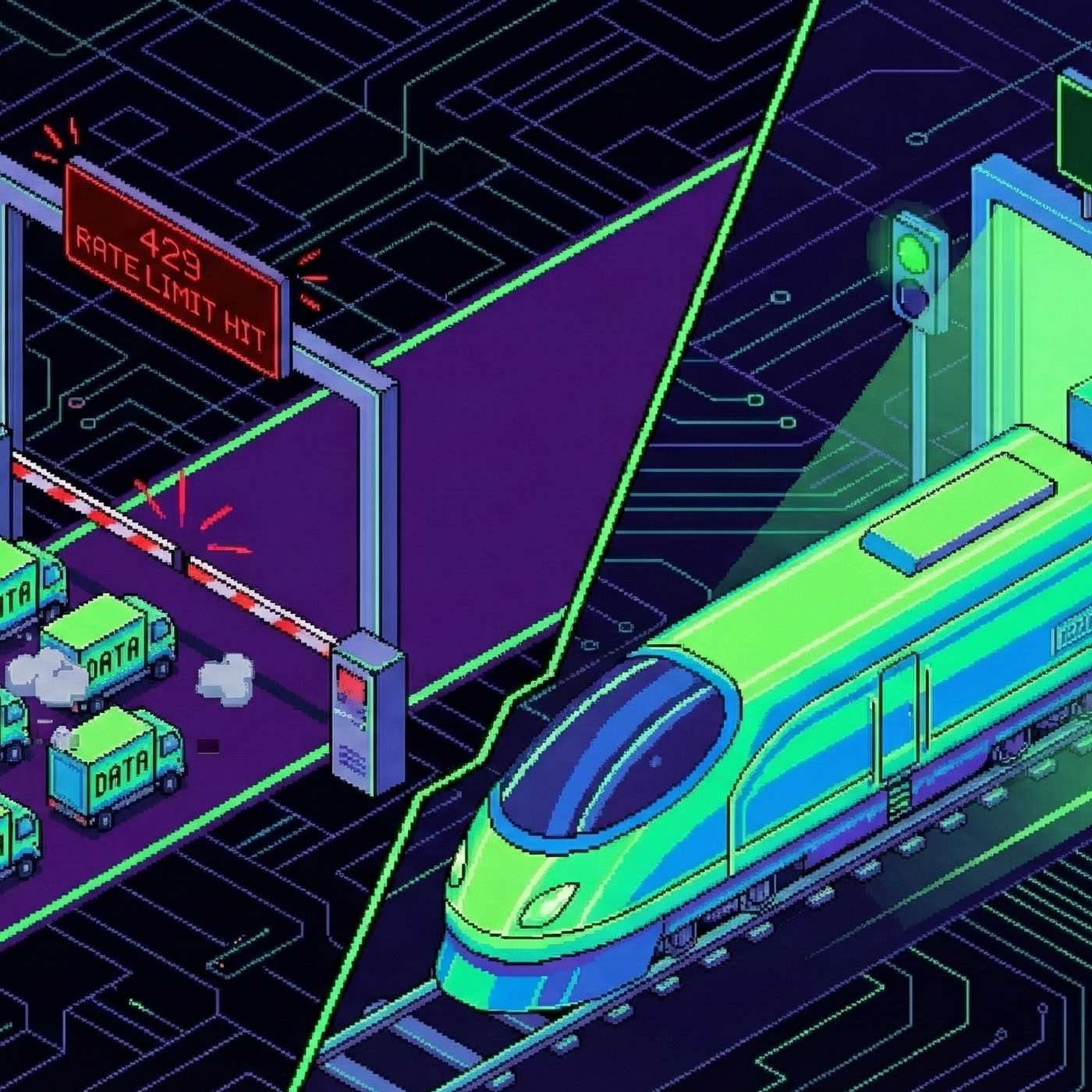

Prompt Rate Limits & Batching: How to Stop Your LLM API From Melting Down

Description

This story was originally published on HackerNoon at: https://hackernoon.com/prompt-rate-limits-and-batching-how-to-stop-your-llm-api-from-melting-down.

LLM APIs have real speed limits. Learn how tokens, rate limits, and batching affect scale—and how to avoid costly 429 errors in production.

Check more stories related to tech-stories at: https://hackernoon.com/c/tech-stories.

You can also check exclusive content about #llm-rate-limits, #prompt-engineering, #batching-llm-requests, #api-throttling, #llm-scaling-strategies, #token-per-minute-limits, #handling-http-429-errors, #llm-production-architecture, and more.

This story was written by: @superorange0707. Learn more about this writer by checking @superorange0707's about page, and for more stories, please visit hackernoon.com.

LLM rate limits are unavoidable, but most failures come from poor prompt design, bursty traffic, and naive request patterns. This guide explains how to reduce token usage, pace requests, batch safely, and build LLM systems that scale without constant 429 errors.
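
To make the pacing and batching ideas in that summary concrete, here is a minimal Python sketch. It is not taken from the original story. The function names, the `send` callable (which stands in for whatever client actually calls the LLM API and returns a status code plus body), and the word-count token estimate are all hypothetical placeholders. The sketch retries on HTTP 429 with exponential backoff plus jitter, and groups prompts into batches that stay under an assumed token budget.

```python
import random
import time
from typing import Callable, List, Tuple


def call_with_backoff(
    send: Callable[[], Tuple[int, str]],  # hypothetical: returns (status, body)
    max_retries: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Retry `send` while it reports HTTP 429, doubling the wait each time."""
    for attempt in range(max_retries + 1):
        status, body = send()
        if status != 429:
            # Simplification: any non-429 response is handed back as-is.
            return body
        if attempt == max_retries:
            break
        delay = min(max_delay, base_delay * 2 ** attempt)
        # Jitter spreads retries out so clients do not all retry at once.
        sleep(delay + random.uniform(0, delay / 2))
    raise RuntimeError("rate limited: retries exhausted")


def batch_by_token_budget(
    prompts: List[str],
    budget: int,
    # Rough stand-in for a real tokenizer: counts whitespace-separated words.
    count_tokens: Callable[[str], int] = lambda s: len(s.split()),
) -> List[List[str]]:
    """Group prompts in order so each batch stays within `budget` tokens.

    A single prompt larger than the budget still gets a batch of its own.
    """
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for prompt in prompts:
        cost = count_tokens(prompt)
        if current and used + cost > budget:
            batches.append(current)
            current, used = [], 0
        current.append(prompt)
        used += cost
    if current:
        batches.append(current)
    return batches
```

In practice, `send` would wrap a real API call and `count_tokens` would use the provider's tokenizer. The budget would come from the account's tokens-per-minute limit, which this sketch does not attempt to look up.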