Episode Details

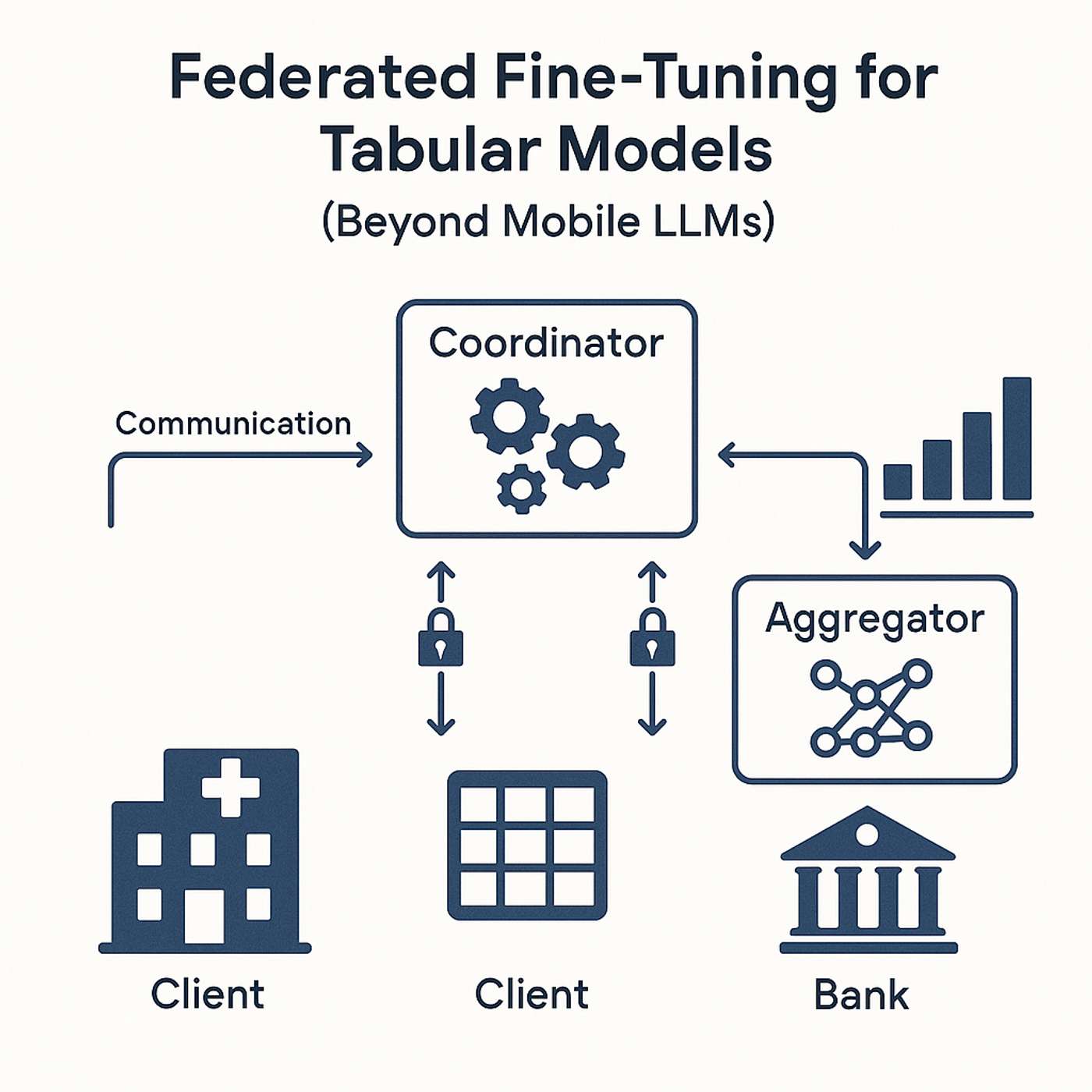

Federated Fine-Tuning for Tabular Models (Beyond Mobile LLMs)

Description

This story was originally published on HackerNoon at: https://hackernoon.com/federated-fine-tuning-for-tabular-models-beyond-mobile-llms.

Federated fine-tuning methods for secure, private and scalable tabular model training in regulated sectors.

Check more stories related to machine-learning at: https://hackernoon.com/c/machine-learning.

You can also check exclusive content about #llms, #fine-tuning-llms, #ai, #artificial-intelligence, #artificial-intelligence-trends, #policy, #ai-policy, #good-company, and more.

This story was written by: @sanya_kapoor. Learn more about this writer by checking @sanya_kapoor's about page, and for more stories, please visit hackernoon.com.

Federated pipelines for XGBoost and TabNet make it possible to train tabular models across organizations without pooling the underlying data in one place. With the right abstractions, they can be made practical.