Contra Dwarkesh on Continual Learning

Dwarkesh Patel’s now widely read post on why he is extending his AI timelines focuses on the idea of continual learning. If you ask me, what we already have is AGI, so the core question is: Is continual learning a bottleneck on AI progress?

In this post, I argue that continual learning as he describes it doesn’t actually matter for the trajectory of AI progress that we are on. Continual learning will eventually be solved, but in a way that gives rise to a new type of AI, rather than as a refinement of what it means to host ever more powerful LLM-based systems.

Continual learning is the ultimate algorithmic nerd snipe for AI researchers, when in reality all we need to do is keep scaling systems and we’ll get something indistinguishable from how humans do it, for free.

To start, here’s the core of the Dwarkesh piece as a refresher for what he means by continual learning.

> Sometimes people say that even if all AI progress totally stopped, the systems of today would still be far more economically transformative than the internet. I disagree. I think the LLMs of today are magical. But the reason that the Fortune 500 aren’t using them to transform their workflows isn’t because the management is too stodgy. Rather, I think it’s genuinely hard to get normal humanlike labor out of LLMs. And this has to do with some fundamental capabilities these models lack.
>
> I like to think I’m “AI forward” here at the Dwarkesh Podcast. I’ve probably spent over a hundred hours trying to build little LLM tools for my post production setup. And the experience of trying to get them to be useful has extended my timelines. I’ll try to get the LLMs to rewrite autogenerated transcripts for readability the way a human would. Or I’ll try to get them to identify clips from the transcript to tweet out. Sometimes I’ll try to get them to co-write an essay with me, passage by passage. These are simple, self contained, short horizon, language in-language out tasks - the kinds of assignments that should be dead center in the LLMs’ repertoire. And they're 5/10 at them. Don’t get me wrong, that’s impressive.
>
> But the fundamental problem is that LLMs don’t get better over time the way a human would. The lack of continual learning is a huge huge problem. The LLM baseline at many tasks might be higher than an average human's. But there’s no way to give a model high level feedback. You’re stuck with the abilities you get out of the box. You can keep messing around with the system prompt. In practice this just doesn’t produce anything even close to the kind of learning and improvement that human employees experience.

The core issue I have with this argument is the dream of making the LLMs we’re building today look more like humans. In many ways I’m surprised that Dwarkesh and other very AGI-focused AI researchers and commentators believe this; it’s the same root argument that AI critics use when they say AI models don’t reason. The goal of making AI more human constrains technological progress to a potentially impossible degree.

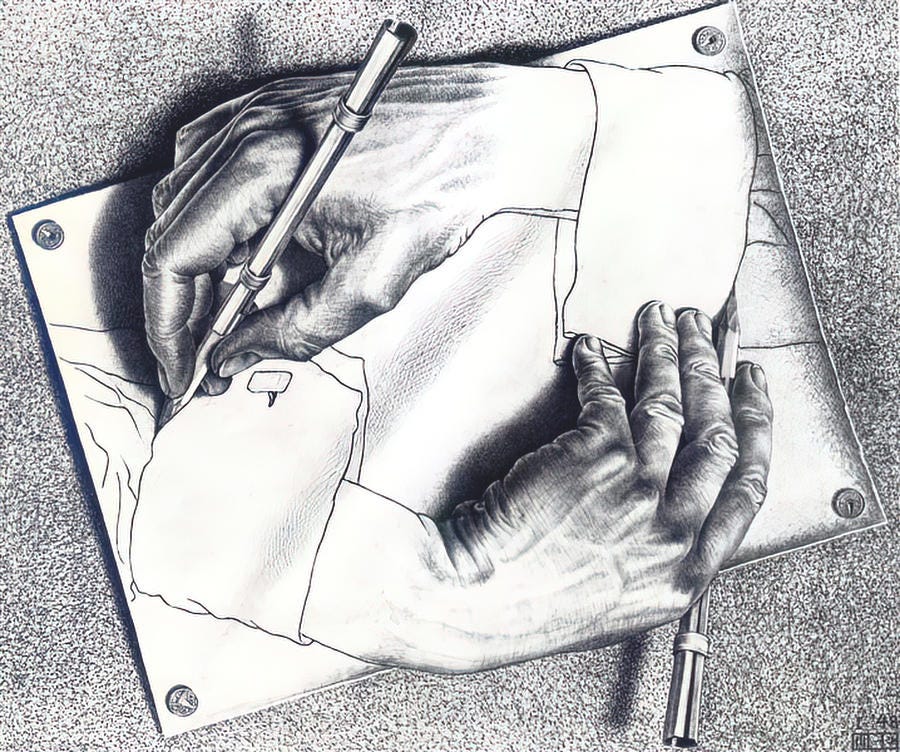

Human intelligence has long been the inspiration for AI, but we have long since moved past it as the mirror we look to. Now the industry is all in on the expensive path of making the best language models it possibly can. We’re no longer trying to build the bird; we’re trying to turn the Wright Brothers’ invention into the 737 in the shortest time frame possible.

To put it succinctly, my argument very much rhymes with some of my past writing.

Do language models reason like humans? No. Do language models reason? Yes.

Will language model systems continually learn like humans? No. Will language model s

Published 1 month ago