📆 ThursdAI - Aug 14 - A week with GPT5, OSS world models, VLMs in OSS, Tiny Gemma & more AI news

Hey everyone, Alex here 👋

Last week, I tried to test GPT-5 and got surprisingly bad results. It turns out, as you'll see below, that this was partly because they had a bug in the router, and partly because ... well, the router itself! Here's an introduction written by GPT-5, and it's actually not bad?

Last week was a whirlwind. We live‑streamed GPT‑5’s “birthday,” ran long, and then promptly spent the next seven days poking every corner of the new router‑driven universe.

This week looked quieter on the surface, but it actually delivered a ton: two open‑source world models you can drive in real time, a lean vision‑language model built for edge devices, a 4B local search assistant that tops Perplexity Pro on SimpleQA, a base model “extraction” from GPT‑OSS that reverses alignment, fresh memory features landing across the big labs, and a practical prompting guide to unlock GPT‑5’s reasoning reliably.

We also had Alan Dao join to talk about Jan‑v1 and what it takes to train a small model that consistently finds the right answers on the open web—locally.

Not bad, eh? Much better than last time 👏 Ok, let's dive in, there's a lot to talk about in this "chill" AI week (show notes at the end as always): first open source, then GPT-5 reactions, and then... world models!

00:00 Introduction and Welcome

00:33 Host Introductions and Health Updates

01:26 Recap of Last Week's AI News

01:46 Discussion on GPT-5 and Prompt Techniques

03:03 World Models and Genie 3

03:28 Interview with Alan Dao from Jan

04:59 Open Source AI Releases

06:55 Big Companies and APIs

10:14 New Features and Tools

14:09 Liquid Vision Language Model

26:18 Focusing on the Task at Hand

26:18 Reinforcement Learning and Reward Functions

26:35 Offline AI and Privacy

27:13 Web Retrieval and API Integration

30:34 Breaking News: New AI Models

30:41 Google's New Model: Gemma 3

33:53 Meta's DINOv3: Advancements in Computer Vision

38:50 Open Source Model Updates

45:56 Weights & Biases: New Features and Updates

51:32 GPT-5: A Week in Review

55:12 Community Outcry Over AI Model Changes

56:06 OpenAI's Response to User Feedback

56:38 Emotional Attachment to AI Models

57:52 GPT-5's Performance in Coding and Writing

59:55 Challenges with GPT-5's Custom Instructions

01:01:45 New Prompting Techniques for GPT-5

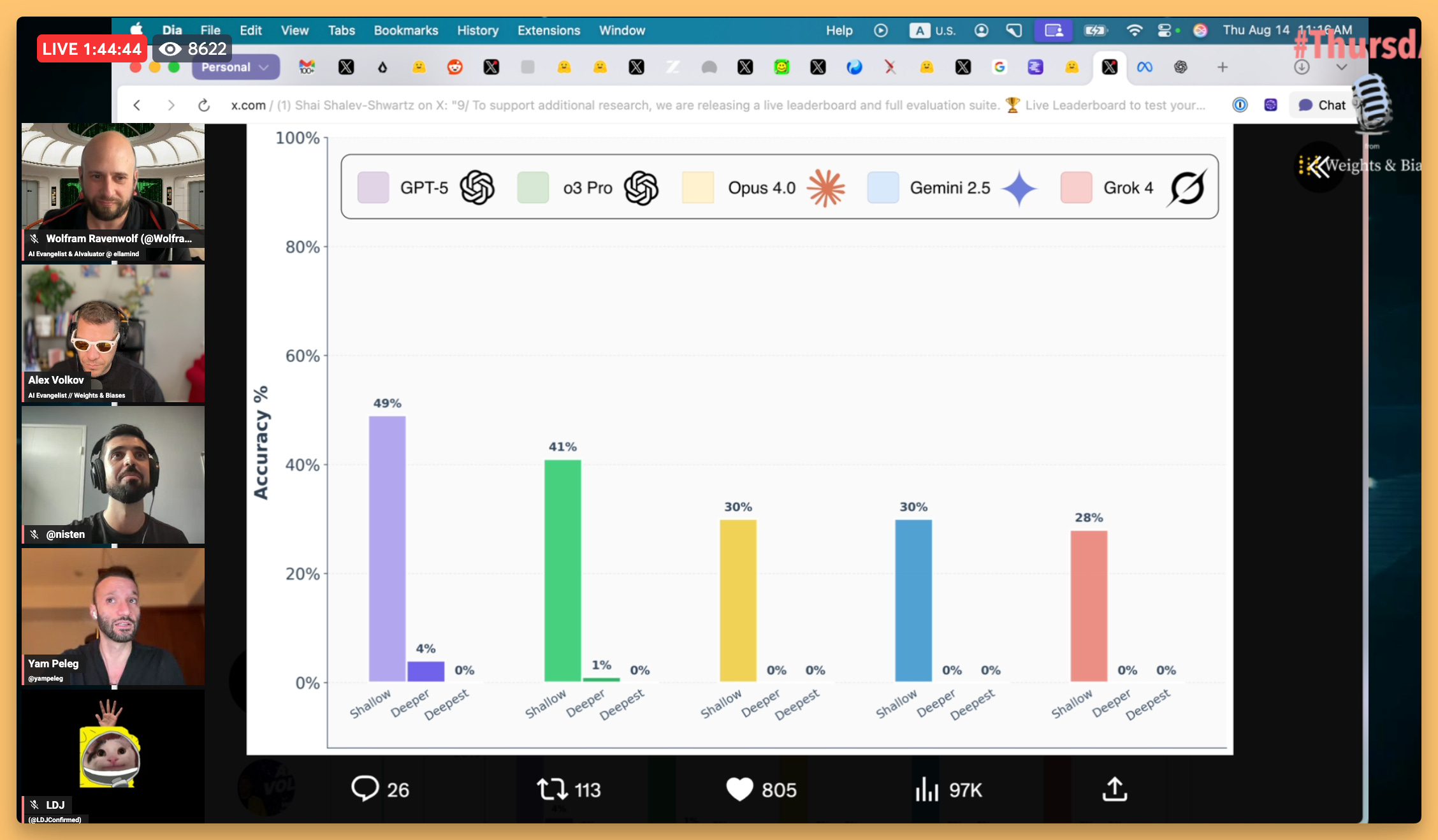

01:04:10 Evaluating GPT-5's Reasoning Capabilities

01:20:01 Open Source World Models and Video Generation

01:27:54 Conclusion and Future Expectations

Open Source AI

We've had quite a lot of open source this week on the show, including breaking news from the Gemma team!

Liquid AI drops LFM2-VL (X, blog, HF)

Let's kick things off with our friends at Liquid AI who released LFM2-VL - their new vision-language models coming in at a tiny 440M and 1.6B parameters.

The Liquid folks continue to surprise with speedy, mobile-ready models that run 2x faster than top VLM peers. With a native 512x512 resolution (images of any larger size get broken into 512x512 tiles) and an OCRBench score of 74, this tiny model beats SmolVLM2 while being half the size. We chatted with Maxime from Liquid about LFM2 back in July, and it's great to see them going multimodal with the same efficiency gains!
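
To get a feel for what 512-pixel tiling means in practice, here is a minimal Python sketch. It is an illustration only, not Liquid's actual preprocessing: the function name `tile_boxes` is hypothetical, and it assumes plain non-overlapping tiles with the edge tiles clipped to the image bounds. The real LFM2-VL pipeline may resize, pad, or add a thumbnail instead.

```python
from math import ceil

TILE = 512  # tile edge in pixels, taken from the 512x512 figure quoted above


def tile_boxes(width, height, tile=TILE):
    """Return (left, top, right, bottom) boxes that cover a width x height image.

    Assumption: tiles do not overlap, and tiles on the right and bottom
    edges are clipped to the image instead of being padded.
    """
    cols = ceil(width / tile)
    rows = ceil(height / tile)
    boxes = []
    for r in range(rows):
        for c in range(cols):
            left, top = c * tile, r * tile
            right = min(left + tile, width)
            bottom = min(top + tile, height)
            boxes.append((left, top, right, bottom))
    return boxes


if __name__ == "__main__":
    # A 1024x768 photo needs a 2x2 grid; the bottom row is only 256 px tall.
    for box in tile_boxes(1024, 768):
        print(box)
```

Under these assumptions, an image that already fits in 512x512 stays a single tile, which is why a small native resolution keeps the token count low on edge devices.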

Zhipu (z.ai) unleashes GLM-4.5V - 106B VLM (X,
