Episode Details

Back to Episodes

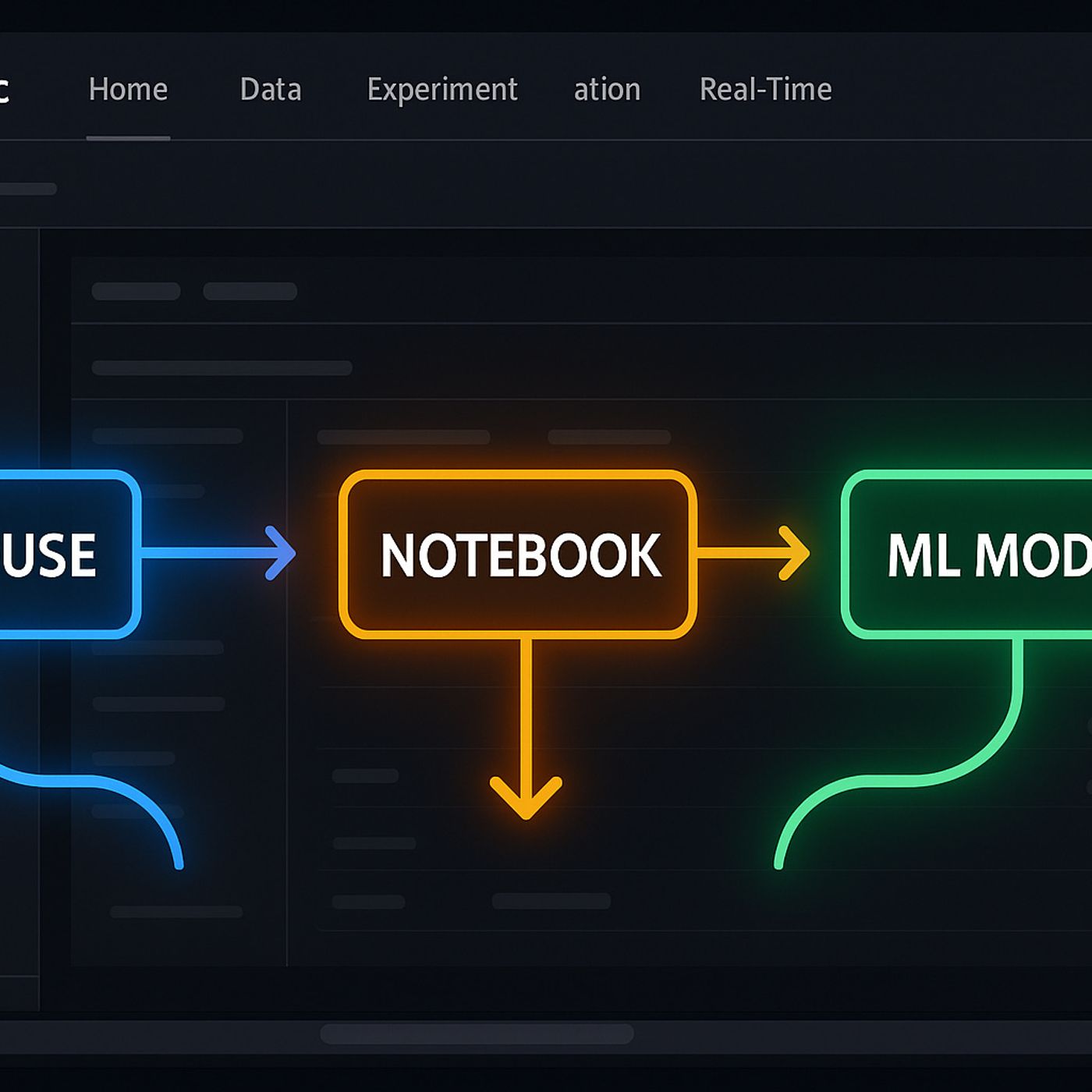

Machine Learning Models in Microsoft Fabric: How to Go from Lakehouse Data to Tracked, Deployable ML in One Workspace

Season 1

Published 10 months ago

Description

Most teams never get their machine learning models out of “notebook purgatory”—they live on laptops, depend on mystery environments, and fall apart the moment someone asks for a production version. In this episode, we start from that reality and walk through how Microsoft Fabric’s data science experience gives you one place to move from raw Lakehouse data to trained, tracked, and deployable models, without juggling exports, ad‑hoc clusters, or spreadsheet‑driven coordination.

We begin with the input problem: hunting data across lakes, files, and reports before you ever touch a model. You’ll hear why Fabric’s Lakehouse changes that equation by putting raw and curated tables in one governed workspace, so analysts and data scientists can pull sales, tickets, and inventory straight into notebooks without five different “final” CSVs and permission detours. That foundation means your features start from a shared, trusted source of truth instead of stitched‑together extracts.

From there, we dive into Python notebooks without the usual dependency drama. You’ll see how Fabric’s preconfigured environments, integrated Spark, and direct Lakehouse connections let you explore data, engineer features, and train models in one place—no custom clusters, no broken kernels, no “works only on my machine.” We talk through practical patterns for iterating quickly while still keeping code readable and reusable for the next person who picks up your work.

Finally, we connect modeling to operations. You’ll learn how Fabric’s built‑in tracking, versioning, and pipeline tooling help you move from experimental notebooks to repeatable training runs and deployable models that can feed reports, apps, or downstream services. By the end, “building ML models in Fabric” won’t just mean writing code—it will mean designing a workflow where data, experiments, and deployments all live in one system that your team can scale and audit over time.

WHAT YOU LEARN

The core insight of this episode is that Microsoft Fabric makes machine learning practical when you treat it as a systems problem, not just a modeling problem. When your data, notebooks, environments, and training pipelines all live in one governed workspace, you stop fighting file hunts and brittle setups and start focusing on models that can actually be trained, retrained, and shipped into real products.

We begin with the input problem: hunting data across lakes, files, and reports before you ever touch a model. You’ll hear why Fabric’s Lakehouse changes that equation by putting raw and curated tables in one governed workspace, so analysts and data scientists can pull sales, tickets, and inventory straight into notebooks without five different “final” CSVs and permission detours. That foundation means your features start from a shared, trusted source of truth instead of stitched‑together extracts.

From there, we dive into Python notebooks without the usual dependency drama. You’ll see how Fabric’s preconfigured environments, integrated Spark, and direct Lakehouse connections let you explore data, engineer features, and train models in one place—no custom clusters, no broken kernels, no “works only on my machine.” We talk through practical patterns for iterating quickly while still keeping code readable and reusable for the next person who picks up your work.

Finally, we connect modeling to operations. You’ll learn how Fabric’s built‑in tracking, versioning, and pipeline tooling help you move from experimental notebooks to repeatable training runs and deployable models that can feed reports, apps, or downstream services. By the end, “building ML models in Fabric” won’t just mean writing code—it will mean designing a workflow where data, experiments, and deployments all live in one system that your team can scale and audit over time.

WHAT YOU LEARN

- Why ML projects stall when data, notebooks, and environments are scattered across tools.

- How Fabric’s Lakehouse gives analysts and data scientists one governed place to source model inputs.

- How preconfigured Fabric notebooks with Spark simplify feature engineering and model training at scale.

- How tracking, versioning, and pipelines in Fabric turn one‑off experiments into repeatable training workflows.

- How to think about Fabric as a full ML workspace, not just “a notebook on top of a data lake.”

The core insight of this episode is that Microsoft Fabric makes machine learning practical when you treat it as a systems problem, not just a modeling problem. When your data, notebooks, environments, and training pipelines all live in one governed workspace, you stop fighting file hunts and brittle setups and start focusing on models that can actually be trained, retrained, and shipped into real products.