Episode Details

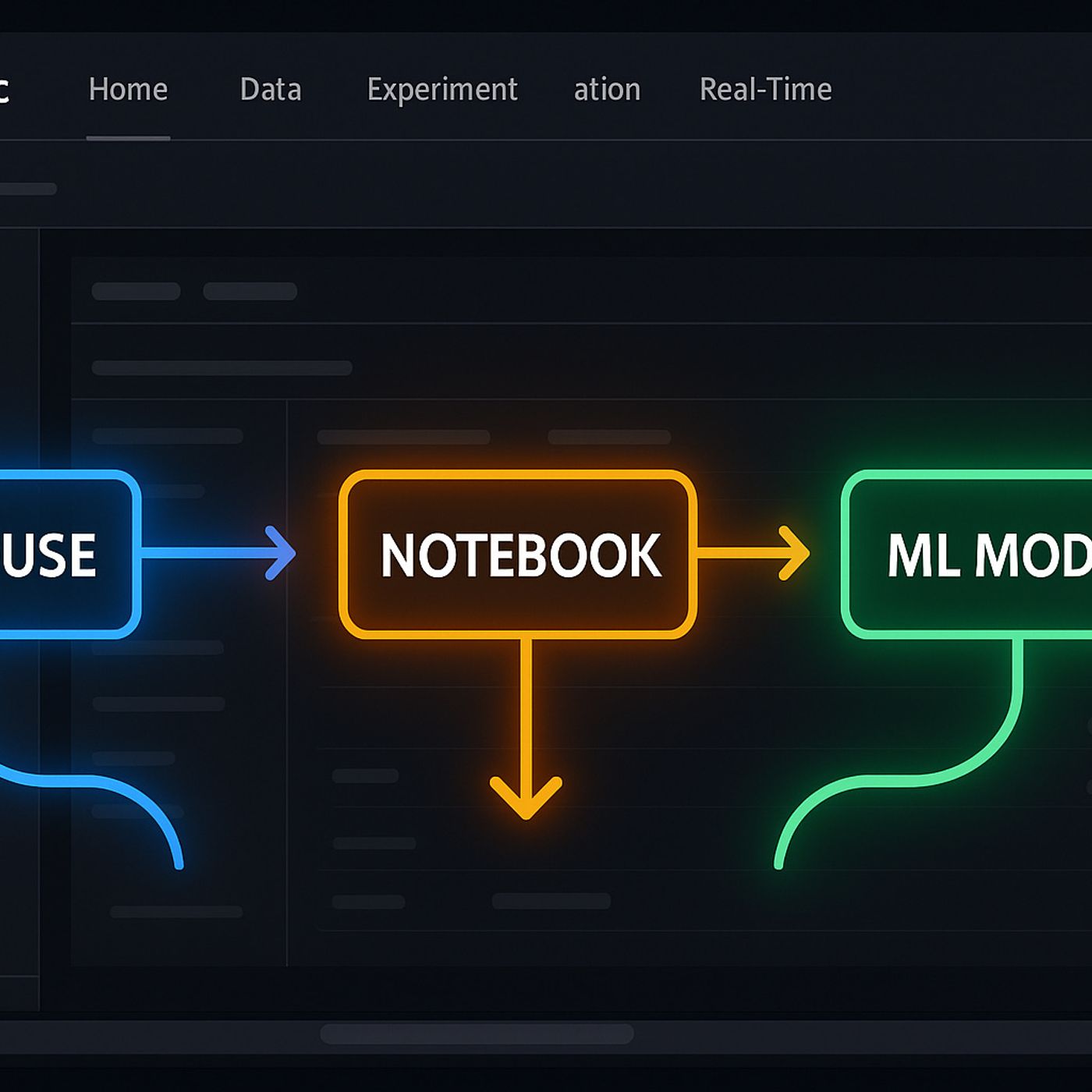

Building Machine Learning Models in Microsoft Fabric

Published 6 months, 3 weeks ago

Description

Ever wondered why your machine learning models just aren’t moving fast enough from laptop to production? You’re staring at data in your Lakehouse, notebooks are everywhere, and you’re still coordinating models on spreadsheets. What if there’s a way to finally connect all those dots inside Microsoft Fabric? Stay with me. Today, we’ll walk through how Fabric’s data science workspace hands you a systems-level ML toolkit: real-time Lakehouse access, streamlined Python notebooks, and full-blown model tracking, all in one pane. Ready to see where the friction drops out?

From Data Swamp to Lakehouse: Taming Input Chaos

If you’ve ever tried to start a machine learning project and felt like you were just chasing down files, you’re definitely not alone. This is the part nobody romanticizes: hunting through cloud shares, email chains, and legacy SQL databases, just to scrape enough rows together for a pass at training. The reality is, before you ever get to modeling, most of your time goes to what can only be described as custodial work. And yet, it’s the single biggest reason most teams never even make it out of the gate.

Let’s not sugarcoat it: data is almost never where you want it. You end up stuck in the most tedious scavenger hunt you never signed up for, just to load up raw features. Exporting CSVs from one tool, connecting to different APIs in another, and then piecing everything together in Power BI, or, if you’re lucky, getting half the spreadsheet over email after some colleague remembered to hit “send.” By the time you think you’re ready for modeling, you’ve got the version nobody trusts and half a dozen lingering questions about what, exactly, that column “updated_date” really means.

It’s supposed to be smoother with modern cloud platforms, right? But even after your data’s “in the cloud,” it somehow ends up scattered.
Files sit in Data Lake Gen2, queries in Synapse, reports in Excel Online, and you’re toggling permissions on each, trying to keep track of where the truth lives. Every step creates a risk that something leaks out, gets accidentally overwritten, or just goes stale. Anyone who’s lost a few days to tracking down which environment is the real one knows: there’s a point where the tools themselves get in the way just as much as the bureaucracy.

That’s not even the worst of it. The real showstopper is when it’s time to build actual features, and you realize you don’t have access to the columns you need. Maybe the support requests data is owned by a different team, or finance isn’t comfortable sharing transaction details except as redacted monthly summaries. So now, you’re juggling permissions and audit logs, one more layer of friction before you can even test an idea. It’s a problem that compounds fast. Each workaround, each exported copy, becomes a liability. That’s usually when someone jokes about “building a house with bricks delivered to five different cities,” and at this point, it barely sounds like a joke.

Microsoft Fabric’s Lakehouse shakes that expectation up. Ignore the buzzword bingo for a minute: Lakehouse is less about catching up with trends and more about infrastructure actually working for you. Instead of twelve different data puddles, you’ve got one spot. Raw data lives alongside cleaned, curated tables, with structure and governance built in as part of the setup. For once, you don’t need a data engineer just to find your starting point. Even business analysts, not just your dev team with the right keys, are able to preview, analyze, and combine live data, all through the same central workspace.

Picture this: a business analyst wants to compare live sales, recent support interactions, and inventory. They go into the Lakehouse workspace in Fabric, pull the current transactions over, blend in recent tickets, all while skipping the usual back-and-forth with IT.
There are no frantic requests to unblock a folder or approve that last-minute API call. The analyst gets the view they need, on demand, and nothing has to be p