Language Agents: From Reasoning to Acting

Description

OpenAI DevDay is almost here! Per tradition, we are hosting a DevDay pregame event for everyone coming to town! Join us with demos and gossip!

Also sign up for related events across San Francisco: the AI DevTools Night, the xAI open house, the Replicate art show, the DevDay Watch Party (for non-attendees), and Hack Night with OpenAI at Cloudflare. For everyone else, join the Latent Space Discord for our online watch party and find fellow AI Engineers in your city.

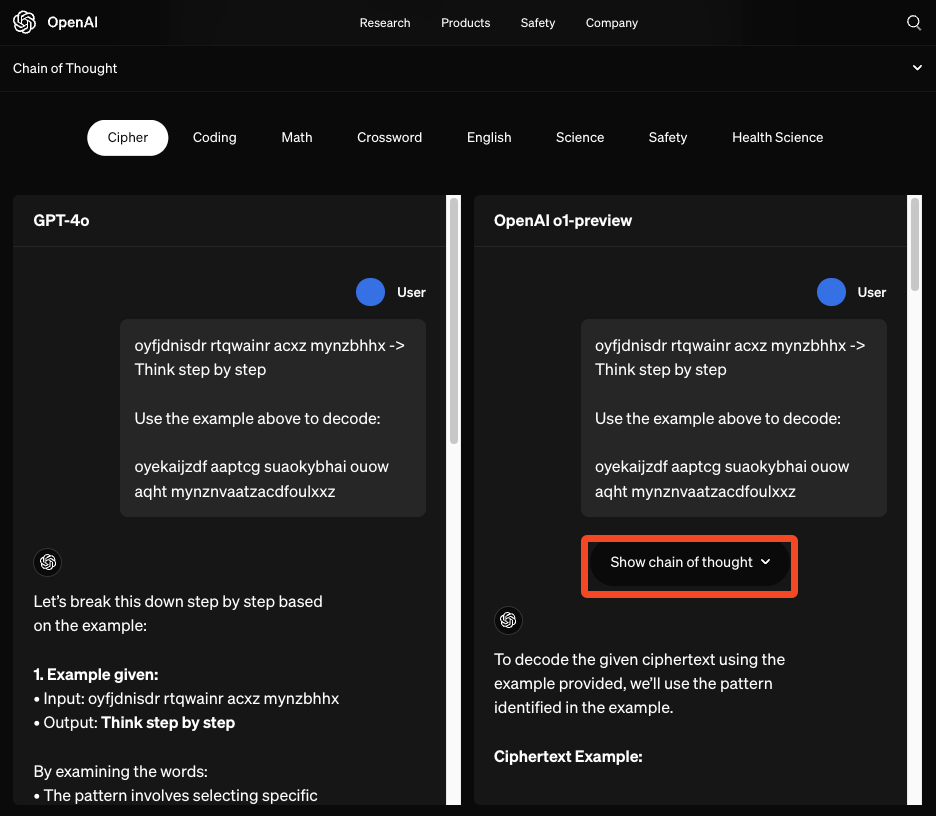

OpenAI’s recent o1 release (and the Reflection 70B debacle) has reignited broad interest in agentic general reasoning and tree search methods.

While we have covered some of the self-taught reasoning literature on the Latent Space Paper Club, it is notable that Eric Zelikman ended up at xAI, whereas OpenAI’s hiring of Noam Brown and now Shunyu suggests more interest in tool-using chain of thought/tree of thought/generator-verifier architectures for Level 3 Agents.

We were more than delighted to learn that Shunyu is a fellow Latent Space enjoyer, and invited him back (after his first appearance on our NeurIPS 2023 pod) for a look through his academic career with Harrison Chase (one year after his first LS show).

ReAct: Synergizing Reasoning and Acting in Language Models

Following the seminal Chain of Thought papers from Wei et al. and Kojima et al., and reflecting on lessons from building the WebShop human ecommerce trajectory benchmark, Shunyu had his first big hit with the ReAct paper. It showed that using LLMs to “generate both reasoning traces and task-specific actions in an interleaved manner” achieved markedly better performance (less hallucination and error propagation, higher success rates on the ALFWorld and WebShop benchmarks) than CoT alone.
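For readers who have not seen the pattern, here is a minimal sketch of the interleaved thought/action/observation loop that the quote describes. It is not the paper's code: `scripted_model` is a hypothetical stand-in for an LLM call, and the `lookup` tool, the `finish[...]` convention, and the `name[argument]` action syntax are assumptions made for illustration.

```python
from typing import Callable, Dict, Tuple

# Hypothetical tool: a tiny lookup table standing in for a real search API.
def lookup(query: str) -> str:
    facts = {"capital of France": "Paris"}
    return facts.get(query, "no result")

TOOLS: Dict[str, Callable[[str], str]] = {"lookup": lookup}

def scripted_model(prompt: str) -> str:
    """Stand-in for an LLM call (assumption): emits one Thought/Action pair."""
    if "Observation: Paris" in prompt:
        return "Thought: I have the answer.\nAction: finish[Paris]"
    return "Thought: I should look this up.\nAction: lookup[capital of France]"

def parse_action(step: str) -> Tuple[str, str]:
    """Extract (name, argument) from the last 'Action: name[argument]' line."""
    action_line = [l for l in step.splitlines() if l.startswith("Action:")][-1]
    call = action_line[len("Action:"):].strip()
    name, _, rest = call.partition("[")
    return name, rest.rstrip("]")

def react(question: str,
          model: Callable[[str], str] = scripted_model,
          max_steps: int = 5) -> str:
    """Alternate model output (thought + action) with tool observations."""
    prompt = f"Question: {question}\n"
    for _ in range(max_steps):
        step = model(prompt)            # reasoning trace plus chosen action
        prompt += step + "\n"
        name, arg = parse_action(step)
        if name == "finish":            # the model decided it is done
            return arg
        tool = TOOLS.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        prompt += f"Observation: {observation}\n"  # feed the result back in
    return "no answer within step budget"

print(react("What is the capital of France?"))
```

The point of the design is that each observation is appended to the prompt before the next model call, so later reasoning steps can be grounded in tool output instead of relying only on what the model generated earlier.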

In even better news, ReAct scales fabulously with finetuning.

As a member of the elite Princeton NLP group, Shunyu was also a coauthor of the Reflexion paper, which we discuss in this pod.

Tree of Thoughts

Shunyu’s next major improvement on the CoT literature was Tree of Thoughts:

Language models are increasingly being deployed for ge