AI Magic: Shipping 1000s of successful products with no managers and a team of 12 — Jeremy Howard of Answer.ai

Description

Disclaimer: We recorded this episode ~1.5 months ago, timed for the FastHTML release. It then got bottlenecked by the Llama3.1, Winds of AI Winter, and SAM2 episodes, so we’re a little late. Since then, FastHTML has been released, swyx is building an app in it for AINews, and Anthropic has also released their prompt caching API.

Remember when Dylan Patel of SemiAnalysis coined the GPU Rich vs GPU Poor war? (If not, see our pod with him.) The idea was that if you’re GPU poor you shouldn’t waste your time trying to solve GPU rich problems (i.e. pre-training large models) and are better off working on fine-tuning, optimized inference, etc. Jeremy Howard (see our “End of Finetuning” episode to catch up on his background) and Eric Ries founded Answer.AI to do exactly that: “Practical AI R&D”, which is very much in line with GPU poor needs. For example, one of their first releases was a system based on FSDP + QLoRA that let anyone train a 70B model on two NVIDIA 4090s. Since then, they have come out with a long list of super useful projects (in no particular order, and non-exhaustive):

* FSDP QDoRA: this is just as memory efficient and scalable as FSDP/QLoRA, and critically is also as accurate for continued pre-training as full weight training.

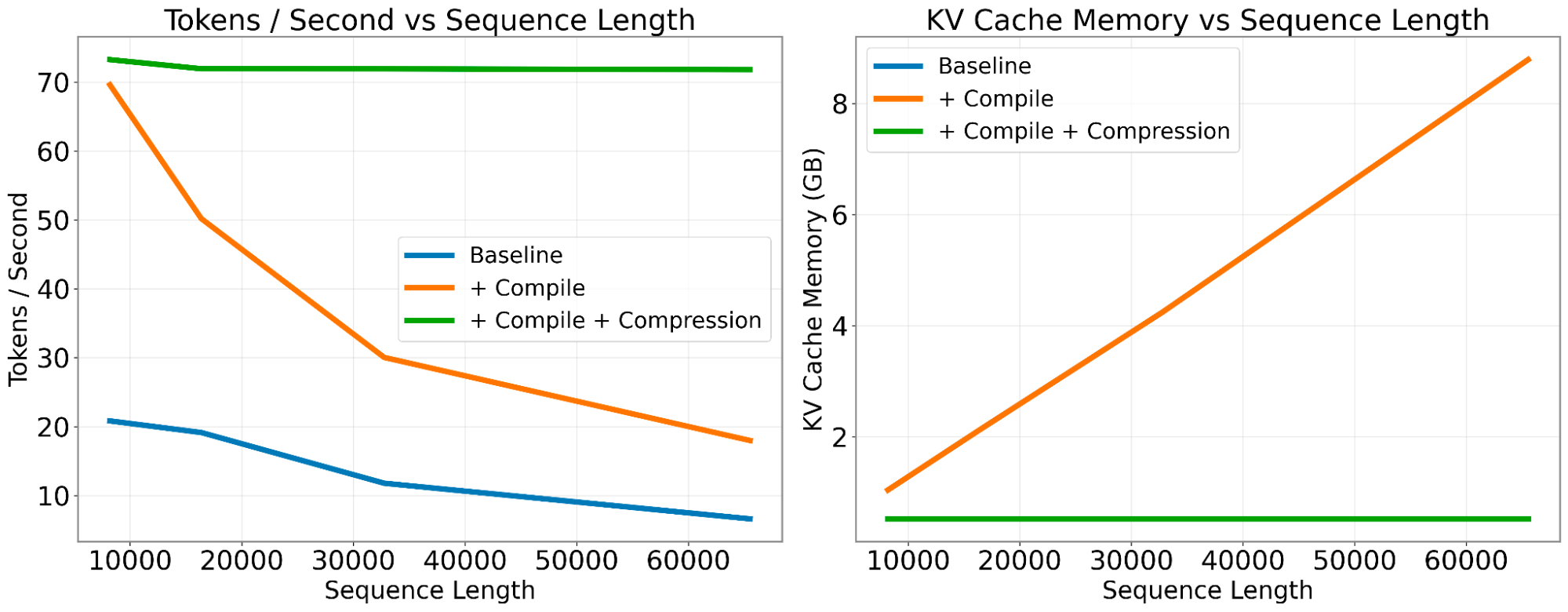

* Cold Compress: a KV cache compression toolkit that lets you scale sequence length without impacting speed.

* colbert-small: a state-of-the-art retriever at only 33M params.

* JaColBERTv2.5: a new state-of-the-art retriever on all Japanese benchmarks.

* gpu.cpp: portable GPU compute for C++ with WebGPU.

* Claudette: a better Anthropic API SDK.

They also recently released FastHTML, a new way to create modern interactive web apps. Jeremy recently released a 1 hour “Getting started” tutorial on YouTube. While this isn’t AI-related per se, it’s close to home for any AI Engineer looking to iterate quickly on new products.

In this episode we broke down 1) how they recruit 2) how they organize what to research 3) and how the