The Busy Person's Intro to Finetuning & Open Source AI - Wing Lian, Axolotl

Description

The Latent Space crew will be at NeurIPS on Tuesday! Reach out with any parties and papers of interest. We have also been incubating a smol daily AI Newsletter and Latent Space University is making progress.

Good open models like Llama 2 and Mistral 7B (whose maker, Mistral, has just released an 8x7B MoE model) have enabled their own sub-industry of finetuned variants for a myriad of reasons:

* Ownership & Control - you take responsibility for serving the models

* Privacy - not having to send data to a third party vendor

* Customization - Improving some attribute (censorship, multiturn chat and chain of thought, roleplaying) or benchmark performance (without cheating)

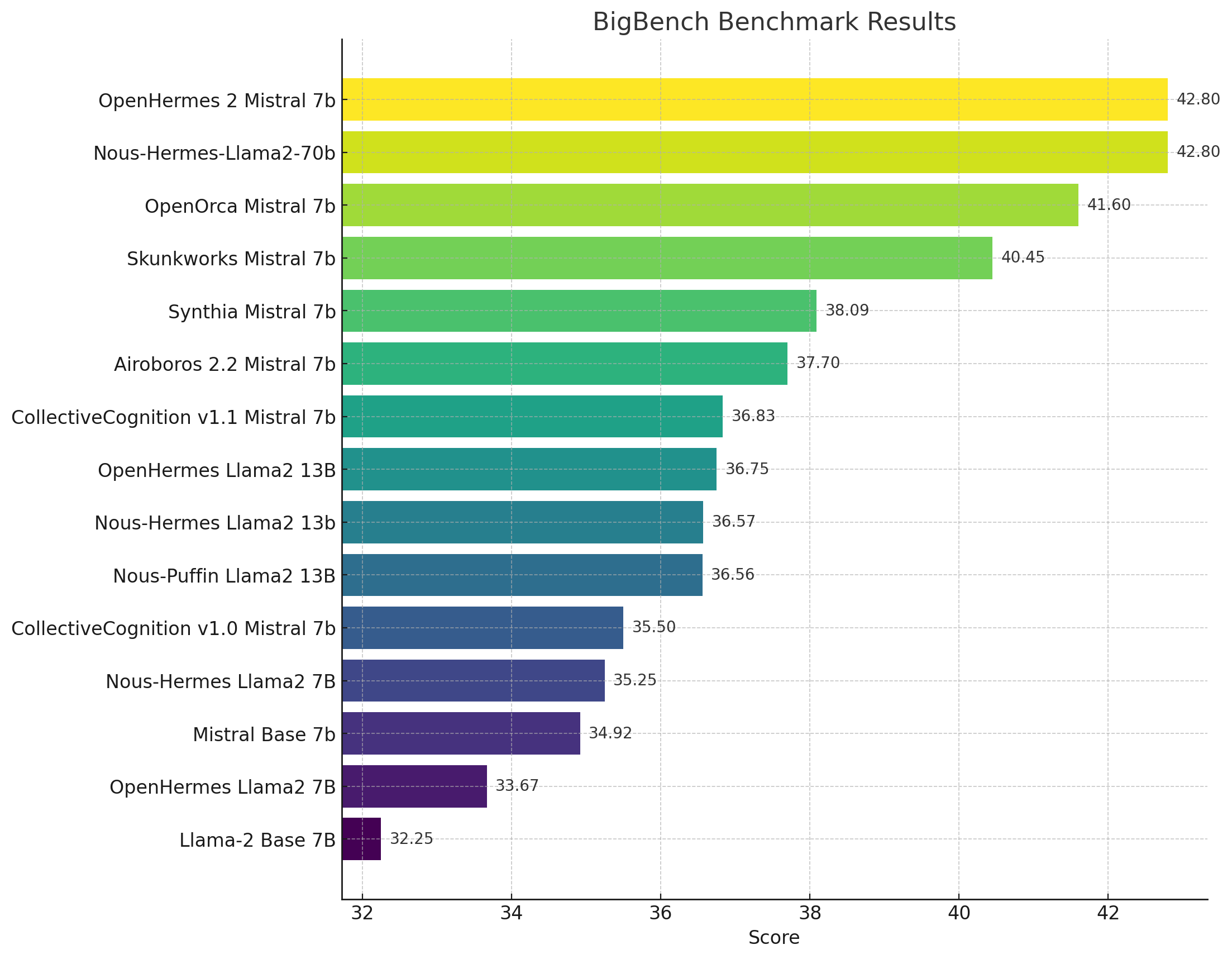

Related to improving benchmark performance is the ability to use smaller (7B, 13B) models that match the performance of larger ones, which brings both cost and inference latency benefits.

Core to all this work is finetuning, and the emergent finetuning library of choice has been Wing Lian’s Axolotl.

Axolotl

Axolotl is an LLM fine-tuner supporting SotA techniques and optimizations for a variety of common model architectures.

It is used by many of the leading open source models:

* Teknium: OpenHermes, Trismegistus, CollectiveCognition

* OpenOrca: Mistral-OpenOrca, Mistral-SlimOrca

* Nous Research: Puffin, Capybara, NousHermes

* Pygmalion: Mythalion, Pygmalion

* Eric Hartford: Dolphin, Samantha

* DiscoResearch: DiscoLM 120B & 70B

* OpenAccess AI Collective: Manticore, Minotaur, Jackalope, Hippogriff

As finetuning is very formatting-dependent, it also provides prompt interfaces and formatters between a range of popular model formats, from Stanford’s Alpaca and Steven Tey’s ShareGPT (which led to Vicuna) to the more NSFW Pygmalion community.
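To make the formatting dependence concrete, here is a minimal sketch of converting a ShareGPT-style conversation record into Alpaca-style prompt/response pairs. The helper name `sharegpt_to_alpaca_pairs` and the sample record are hypothetical and for illustration only; this is not Axolotl's API, and the template shown is the commonly used no-input Alpaca prompt.

```python
# Illustrative only: these names are hypothetical, not Axolotl's API.

# The widely used Alpaca prompt template for instructions without extra input.
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:\n"
)


def sharegpt_to_alpaca_pairs(record):
    """Turn one ShareGPT-style record into (prompt, response) pairs.

    Each human turn that is immediately followed by a gpt turn becomes
    one pair. Earlier turns are NOT carried into the prompt.
    """
    pairs = []
    turns = record["conversations"]
    for prev, cur in zip(turns, turns[1:]):
        if prev["from"] == "human" and cur["from"] == "gpt":
            prompt = ALPACA_TEMPLATE.format(instruction=prev["value"])
            pairs.append((prompt, cur["value"]))
    return pairs


# A made-up record in the ShareGPT conversation layout.
example = {
    "conversations": [
        {"from": "human", "value": "Name a salamander."},
        {"from": "gpt", "value": "The axolotl."},
    ]
}

pairs = sharegpt_to_alpaca_pairs(example)
```

Note that this naive conversion drops multi-turn context, which is one reason the prompt format chosen for finetuning matters so much.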

Nous Research Meetup

We last talked about Nous at the DevDay Recap at the e/acc “banger rave”. We met Wing at the Nous Research meetup at the a16z offices in San Francisco, where they officially announced their company and future plans, including Nous Forge.

Show Notes

We’ve already covered the nuances of Dataset Contamination and
